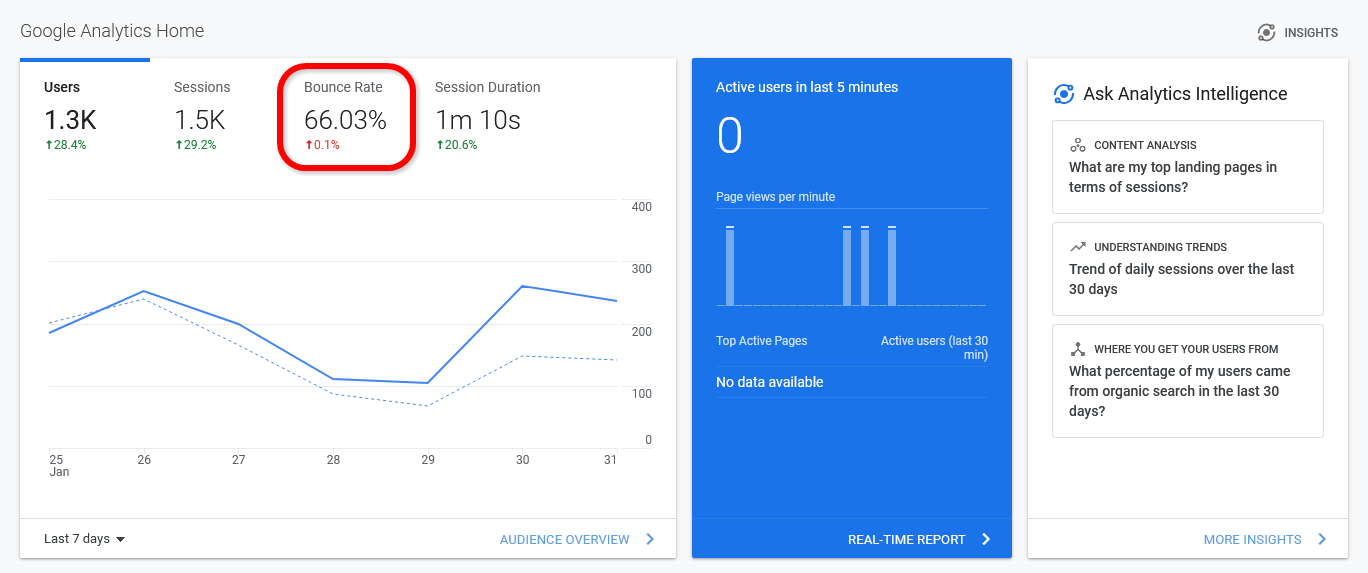

When you’re sifting through your website analytics, one of the most important metrics you’ll find is your bounce rate. Bounce rate is the percentage of visitors who leave your website after viewing only a single page. A high bounce rate generally means that visitors didn’t find the page interesting enough to continue browsing your site, let alone buy anything from your business.
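To make the definition concrete, here is a minimal Python sketch of how the metric could be computed. It assumes you can export the number of pages viewed in each visit from your analytics tool; the function name and the sample numbers are hypothetical.

```python
def bounce_rate(pages_per_session):
    """Return the share of sessions that viewed exactly one page."""
    if not pages_per_session:
        return 0.0
    bounces = sum(1 for pages in pages_per_session if pages == 1)
    return bounces / len(pages_per_session)


# Hypothetical sample: each number is the page count of one visit.
sessions = [1, 3, 1, 5, 2, 1, 1, 4]
print(f"Bounce rate: {bounce_rate(sessions):.0%}")  # prints "Bounce rate: 50%"
```

Analytics tools may define a bounce slightly differently (for example, by also looking at engagement time), so treat this as an illustration of the basic idea rather than a replacement for your tool’s report.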

Entrepreneurs should always look for ways to lower the bounce rate of their web pages. After all, a lower bounce rate means that visitors are spending more time browsing your content and your online store, which will lead to more customer conversions and more sales. Fortunately, you can use many smart strategies to reduce your bounce rate and keep visitors around for longer. Here are 11 ways to lower your bounce rate and increase session duration.

Improving the loading speed of your website is one of the best things you can do. This simple change can almost instantly reduce your bounce rate, increase the average session duration of viewers, and enhance your search engine rankings. Of course, this will also have a positive impact on how people react to your website and how many viewers turn into customers.

Tricks such as compressing your content, minimizing HTTP requests, and allowing asynchronous loading for certain files can help speed up your website. It’s also important to get high-quality hosting. However, to ensure that your website is as efficient as possible, you might want to ask a professional web developer to help you out. Even a 1-second increase in the average loading time can have a significant impact on session duration and conversions.
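If you’re curious how much compression can help, the following Python sketch estimates the savings from gzip, one common compression method. The sample markup is made up for illustration, and real pages will usually compress less dramatically than this repetitive example.

```python
import gzip


def compression_savings(payload: bytes) -> float:
    """Return the fraction of bytes saved by gzip-compressing the payload."""
    if not payload:
        return 0.0
    compressed = gzip.compress(payload)
    return 1 - len(compressed) / len(payload)


# Hypothetical page content: repetitive markup compresses very well.
html = ("<li class='product'>Sample product</li>\n" * 500).encode("utf-8")
print(f"gzip saves about {compression_savings(html):.0%} of the transfer size")
```

In practice, compression is normally switched on in the web server or CDN configuration rather than in application code.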

Another way to reduce your bounce rate and keep visitors around longer is to make your website easier to navigate. If people can’t instantly find what they need on your website, they’re likely to get frustrated and leave. As such, you’ll want to ensure that everything is easy to find and that potential customers have no problem finding what they’re looking for.

Many websites handle this by using large navigation buttons for important parts of their website, such as their online shop and their FAQ page. Providing internal links between pages to link people to things they might be interested in can also help. Asking people to test your website for usability can help you tackle potential problems and improve the ease of navigation.

Encountering a page with missing images or a “404 Not Found” message when clicking on a link is an immediate turnoff for many visitors. Errors like these make your site look unprofessional and unmaintained.

Use our broken link checker service to identify dead links and fix them before they affect your reputation and drive away potential customers.
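To illustrate what a link check involves, here is a rough Python sketch that collects the links and images on a page and reports those that return an error status. The `get_status` callback is a stand-in for a real HTTP request, and the URLs and status codes are invented for the example.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collect the href of every <a> tag and the src of every <img> tag."""

    def __init__(self):
        super().__init__()
        self.urls = []

    def handle_starttag(self, tag, attrs):
        attr_name = {"a": "href", "img": "src"}.get(tag)
        if attr_name:
            value = dict(attrs).get(attr_name)
            if value:
                self.urls.append(value)


def find_broken(html, get_status):
    """Return the URLs found in html whose status code is 400 or higher."""
    collector = LinkCollector()
    collector.feed(html)
    collector.close()
    return [url for url in collector.urls if get_status(url) >= 400]


# Stand-in for real HTTP requests (hypothetical data).
known_statuses = {"/shop": 200, "/old-page": 404, "/logo.png": 200}
page = '<a href="/shop">Shop</a> <a href="/old-page">Sale</a> <img src="/logo.png">'
print(find_broken(page, lambda url: known_statuses.get(url, 404)))  # ['/old-page']
```

A real checker also has to crawl every page, follow redirects, and handle timeouts, which is where a dedicated service saves time.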

Sometimes keeping visitors on your website is all about aesthetics. If someone visits your site and finds that it looks like a website from the 1990s, they’ll probably think that your business is old and outdated. Even though less is more sometimes, a visually unappealing website can cause visitors to swiftly leave.

While your website doesn’t need to be too ostentatious, a few visual upgrades and an attractive template can go a long way. You might even want to ask a web design service to help you make your website look as good as possible while still ensuring that it loads fast and is easy to navigate.

Using internal links throughout your website has all kinds of benefits. Internal links can help you improve your Google ranking for certain keywords, which will help you gain more visitors. What’s more, if you use internal linking appropriately, visitors are much more likely to click on these links and keep exploring your website, leading to a huge reduction in your bounce rate.

You should use internal links in your blog posts to link relevant keywords to other helpful pages on your website. You should also include a call-to-action (CTA) on each page that leads viewers to your online store. Adding internal links between relevant products in your online store can also help you keep users browsing and boost your customer conversions.

Adding some enticing interactive content to your blog posts is an excellent way to increase the average session duration of visitors to your website. After all, people will naturally stay on your website longer if they’re watching a video, doing a quiz, or exploring a fascinating interactive infographic.

These features can also help you reduce your bounce rate. When your blog posts offer engaging features like videos, quizzes, and infographics, people will get invested and read more of your content. You can even enhance your sales by using these interactive features to lead people to your online store and including some interactive content on your product pages.

One of the biggest causes of high bounce rates is websites that aren’t mobile-friendly. Many consumers nowadays use their smartphones, tablets, and other portable devices to browse the internet. If your website doesn’t cater to these devices, you’ll lose tons of visitors who would simply rather use a website they can read and browse on their phone.

Making your website more mobile-friendly involves enhancing your layout, making text readable on small devices, and breaking content into small paragraphs to make it easier to read. Once again, you might want to ask a professional web design service for help to make your website more mobile-friendly, especially as it’ll boost session duration and reduce your bounce rate.

If you want to turn more of your website viewers into customers, you need to make sure your online store is fun, appealing, and easy to navigate. The more time people spend browsing your online store, the more likely they are to ultimately buy from you. Naturally, this will also have a great impact on your bounce rate and session duration.

There are a few tricks you can use to keep people engaged in your online store. Adding image links to related products on every product page can catch the attention of viewers. You should also include high-quality product images and even product videos to demonstrate your products. You should make sure your product pages load fast and are easy to navigate on all devices. Including large “Buy Now” or “Add to Cart” buttons can also help boost sales.

Some websites blast visitors with unwanted pop-ups as soon as they visit. Between advertisements, pop-up boxes asking them to accept all cookies, and requests to sign up to an email list, visitors can become frustrated, and these features may cause them to instantly leave a website. As such, you’ll want to avoid them as much as possible.

While you need to ask visitors to accept cookies, you should do so with a small footer rather than a huge pop-up box. You should also avoid big, annoying ads in favor of organic links in your content. Instead of using pop-up boxes to ask people to join your mailing list or check out your products, add these CTAs to your blog pages or somewhere on your website where they’re less obtrusive.

Collecting feedback from website visitors is one of the best ways to improve your website. This helps you instantly discover and solve problems with your website usability. For instance, you might find out that mobile users find it hard to browse your website. You can then work on making your website easier to browse on portable devices.

By finding and tackling these problems, you can impress more viewers, resulting in a lower bounce rate and higher average session duration. You might want to send out feedback surveys to your customers to ask them how easy it was to use your website. You could also pay for usability testing, where impartial testers thoroughly test your website and give you tips on how to improve its usability.

Encouraging visitors to stick around longer on your product pages is one of the best things you can do. The more time they spend browsing your products, the more likely they are to ultimately buy something. As such, you’ll want to optimize your product pages as much as possible to prevent visitors from leaving.

High-quality images of your products can help. Offer pictures from every angle so customers can check out each product thoroughly. Product videos can also help, especially as these can make visitors invest a few minutes into discovering more about each product. Detailed product descriptions and product reviews can also keep people reading and entice them to make a purchase.

If you want to boost your Google search ranking and enhance your sales, lowering your bounce rate and increasing your average session duration can help. By focusing on these analytics and improving them, you’ll keep people around on your website much longer. This will result in a higher rate of customer conversions as well as a significant boost in future traffic.

These 11 strategies can help you significantly improve these analytics and enhance the success of your website. Not only can these tips help online businesses make more sales, but even if you’re not trying to sell anything, decreasing your bounce rate can help you bring more visitors to your site and build a bigger following.

If your website isn’t getting many views despite high-quality content, or if you’re seeing a steady drop in organic traffic, odds are that poor SEO practices are to blame. While plenty of great SEO companies and consultants are available to help you improve your page ranking factors, you might be surprised to learn how much you can do yourself with a minimal understanding of WordPress or HTML.

Before you spend any money on professional services, use this quick DIY audit guide to gauge the quality of your SEO.

SEO involves much more than just keywords. Site speed has been one of the most crucial factors for quite some time – most notably since Google introduced a dedicated page speed update back in 2018. If your site takes more than two seconds to load, it’s likely causing your page rankings to suffer. Data released directly by Google reveals that every extra second that your page takes to load dramatically increases bounce rate (the percentage of users who leave the site after viewing only one page).

Luckily, Google’s PageSpeed Insights tool can tell you exactly how long your loading times are, as well as point out specific areas for improvement so that you or your web developer can make the necessary changes to boost performance, improving both your SEO and user experience.

If your site isn’t optimized for mobile use, it’s time to make that a priority; the majority of online traffic is mobile, and that trend isn’t changing any time soon. In fact, Google now actually indexes the mobile version of websites first, so the lack of an adaptive, responsive, or mobile-first design that makes it easy for people to navigate on their devices will have a sizable negative impact on your ranking.

As with page speed, Google offers free tools to analyze mobile performance and fix any potential problems. Just use Google’s Mobile-Friendly Test to see how your site stacks up.

One of the most basic and most important checks you can run is to ensure that only a single version of your site is being indexed by Google. In an extreme case, search engines could see four different versions of your site: http://example.com, http://www.example.com, https://example.com, and https://www.example.com.

While this makes no difference to a user browsing your site, it can cause big SEO problems by making it difficult for search engines to know which version to index and rank for query results. In many cases, separate versions of a site can even be interpreted as duplicate content, which further impacts your content’s visibility and rankings.

If you run a manual check and discover a mix of site versions, the easiest fix is to simply set up a 301 redirect on the “duplicate” versions to let search engines know which one to index and rank. Another option is to use a rel="canonical" tag on your individual web pages, which is just as effective as a 301 redirect but may require less time for you or your web developer to implement.
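The following Python sketch shows the idea behind the redirect: every protocol and www combination of an address is mapped onto one preferred version. It assumes that https://www.example.com is the version you want indexed and that the URL passed in belongs to your own site; both function names are hypothetical.

```python
from urllib.parse import urlsplit, urlunsplit


def site_variants(host):
    """Return the four scheme/www combinations a crawler could see for a host."""
    bare = host[4:] if host.startswith("www.") else host
    return [
        f"{scheme}://{prefix}{bare}/"
        for scheme in ("http", "https")
        for prefix in ("", "www.")
    ]


def canonicalize(url, preferred_host="www.example.com"):
    """Map any variant of a site URL onto the preferred https host."""
    parts = urlsplit(url)
    # Keep path and query, force the scheme and host, drop the fragment.
    return urlunsplit(("https", preferred_host, parts.path or "/", parts.query, ""))


for variant in site_variants("example.com"):
    print(variant, "->", canonicalize(variant))
```

The actual 301 redirect is usually configured in your web server or hosting control panel; the sketch only shows which target each variant should end up at.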

While your site’s performance is a major ranking factor, it’s still important to consider ways of improving your traditional on-page SEO. In addition to creating great, unique content that provides value to the user, you’ll want to look at optimizing title tags, meta descriptions, and image alt tags, as well as improving your internal linking and pulling any bad outbound links.

A number of great tools can help you find any problem areas or opportunities you have missed. Popular options that can quickly provide a list of actionable items include SE Ranking, SEO Tester, and SEMrush’s On-Page SEO Checker tool.

Another great SEO audit tool that focuses specifically on internal and outbound links is Dr. Link Check. It crawls your website and gives you a complete list of all its links. You can then filter that list down to show only the links you are interested in, such as broken links or dofollow links to external websites.

Backlinks are one of Google’s top three factors when determining page rankings, and they can be enormously beneficial when it comes to increasing traffic, as long as they’re legitimate, high-quality links. But there are also so-called “toxic” backlinks that can negatively impact your organic traffic and site rankings. In extreme cases, they can even result in a manual action being taken against your site by Google.

These harmful backlinks are usually the direct result of trying to game the system, whether it’s paying for links, joining shady private blog networks, submitting your site to low-quality directories, or blatantly spamming your links all over the web. While it’s an uncommon tactic, there are also cases of unscrupulous webmasters deliberately using toxic backlinks as a way to sabotage competitors. Even if you aren’t using any of these tactics yourself, it’s still worth doing a periodic check to make sure everything’s above board.

By using a combination of SEMrush’s Backlink Audit tool and the Google Search Console, you can determine whether you have any backlink issues to address. If you do find any problematic backlinks, the two main options are sending out removal requests via email and hoping they get removed, or using the Google Disavow Tool to tell Google that you want them to ignore certain links.
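A disavow file is a plain text file with one entry per line: either a full URL or a whole domain prefixed with `domain:`, with `#` marking comments. Here is a small Python sketch that builds such a file from a list of problem links. The function name and the sample entries are hypothetical.

```python
def build_disavow_file(domains=(), urls=()):
    """Return the text of a disavow file for the given domains and URLs."""
    lines = ["# Links we could not get removed by request"]
    lines += [f"domain:{domain}" for domain in sorted(set(domains))]
    lines += sorted(set(urls))
    return "\n".join(lines) + "\n"


print(build_disavow_file(
    domains=["spam-directory.example"],
    urls=["http://blog-network.example/cheap-links.html"],
))
```

Disavowing is a last resort, so review the list carefully before you upload it.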

Keep in mind that the point of a DIY SEO audit is to conduct some simple checks that anyone who knows their way around WordPress or basic HTML can handle. If you’ve done everything on this list and still seem to be struggling to gain traction, or if you’re seeing a steady drop in organic traffic, the problem might be something more complex.

In that case, it’s important to know the limits of your own abilities. If you start poking around in code that you aren’t familiar with, there’s a very real risk of doing more harm than good. Hiring someone to fix mistakes is a costly headache that nobody wants to deal with. Instead, consider hiring an SEO specialist with more in-depth knowledge on the subject as soon as you know the problem is something you’re not sure how to fix. The good news is that if you’ve already done a basic audit, that means less work (and billable hours) that a professional needs to do before they can diagnose the issues.

While professional assistance is certainly necessary in some cases, the truth is that many of the most common SEO mistakes can be corrected without much technical know-how, thanks to the robust analytical tools and guidelines available. So before you spend your hard-earned money, walk through the steps outlined in this guide to identify your issues and see if it’s something that can be handled with a few minutes of your or your web developer’s time.

Many users hit the back button within seconds of loading a web page, so it’s no surprise that lots of websites are primarily designed to look nice. Humans are inherently visual creatures, but that doesn’t mean users will overlook poor usability just because a site looks pretty. An ugly website that works great might turn away most visitors, but a pretty website that doesn’t work well won’t keep anyone around. To create a successful website, it’s critical to not just make it look good, but also ensure that it’s easy to use, fast, secure, and in compliance with every applicable law.

Users probably landed on your website for a reason. Maybe they were interested in purchasing your product; maybe they needed to call your customer support. Regardless, your website should make it easy for them to find what they’re looking for. Navigation should be intuitive, clear, and efficient so that visitors don’t get frustrated and leave. If you want to encourage visitors to do something (like sign up for your service), include a clear call to action on the relevant pages.

Your site has to work well on every device a potential customer might be using. Mobile-friendliness is a basic expectation in today’s world. Accessibility and internationalization aren’t nearly as hard as they sound, and they make a huge difference for users who aren’t from your country and those with disabilities.

Professionalism is also a big part of usability. Not proofreading content is a surefire way to lose anyone who reads carefully. Broken links are a more subtle issue, but they can tarnish your reputation and users’ trust when a link goes somewhere unexpected. Periodically clicking every single link on your website is a waste of time, so services like Dr. Link Check make it easy to avoid giving visitors an unwelcome surprise.

Even the most easy-to-use website won’t attract any customers if it isn’t ranked highly by search engines. Following basic SEO guidelines will significantly boost the number of hits your site receives. Simple tweaks like making sure to implement <meta> tags on every page, using expressive <title> elements, and putting descriptive text in alt attributes on images make it much easier for search engines to index and rank your pages.
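As a rough illustration, the Python sketch below checks a single page for three of these basics: a non-empty title, a meta description, and alt text on images. The class and function names are hypothetical, and the check is far less thorough than a dedicated SEO tool.

```python
from html.parser import HTMLParser


class OnPageAudit(HTMLParser):
    """Track whether a page has a title, a meta description, and image alt text."""

    def __init__(self):
        super().__init__()
        self.has_title = False
        self.has_description = False
        self.images_missing_alt = 0
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            if (attrs.get("content") or "").strip():
                self.has_description = True
        elif tag == "img" and not (attrs.get("alt") or "").strip():
            self.images_missing_alt += 1

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title and data.strip():
            self.has_title = True


def audit(html):
    """Return a list of human-readable issues found in the page."""
    parser = OnPageAudit()
    parser.feed(html)
    parser.close()
    issues = []
    if not parser.has_title:
        issues.append("missing title")
    if not parser.has_description:
        issues.append("missing meta description")
    if parser.images_missing_alt:
        issues.append(f"{parser.images_missing_alt} image(s) missing alt text")
    return issues


sample = ('<html><head><title>Blue Jeans</title></head><body>'
          '<img src="jeans.jpg"><img src="logo.png" alt="Shop logo"></body></html>')
print(audit(sample))  # ['missing meta description', '1 image(s) missing alt text']
```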

Instead of manually adjusting the HTML on every page, your CMS or static site generator should offer some configuration options to automate these fixes. Additionally, a machine-readable XML sitemap is an easy automated addition that will help crawlers find every page you host on your site.
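If your CMS doesn’t generate a sitemap for you, the format is simple enough to produce yourself. This Python sketch builds a minimal sitemap containing only the required `<loc>` entries; the function name and URLs are placeholders.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a minimal XML sitemap (as text) listing the given page URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


pages = ["https://www.example.com/", "https://www.example.com/shop/jeans"]
print(build_sitemap(pages))
```

Save the result as a UTF-8 file (commonly sitemap.xml at the root of your site) so the encoding matches the declaration in the first line.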

People are impatient when it comes to waiting around for web pages to load. Google found that 53% of mobile visitors left when a page took more than three seconds to load. Making sure your website is fast (especially on mobile devices and connections) is an important and relatively easy way to significantly reduce the number of people who hit the back button before seeing any content.

Assets like images, JavaScript, and CSS files are some of the largest files your visitors will have to load from your site. In the case of images, be sure to downsize and compress them appropriately. For scripts and stylesheets, don’t forget to minify them. If page load times are still too high, try using a CDN, which will cache these large files near your users. If you’re using a CMS like WordPress, unnecessary plugins are another frequently forgotten source of slow load times. Aside from lengthening render times, plugins can inject additional JavaScript and CSS that your users have to download.

Not using HTTPS is like publicly advertising that you don’t wear a seatbelt: it’s risky, it looks bad, and it doesn’t give you any benefits. Search engines will rank HTTP-only pages lower than sites that allow secure connections. More importantly, you’re needlessly risking the security of your users’ data and most likely violating regulations like the GDPR.

If your site has any kind of login functionality, be sure to treat user data carefully. Use strong hashing functions to prevent attackers from stealing passwords if your server is compromised. Regardless of what your site does, follow security best practices on your server: use strong passwords, update server software frequently (and plugins, if applicable), use a correctly-configured firewall, and limit remote access over SSH and similar protocols.
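As an illustration of what storing passwords carefully can look like, here is a minimal Python sketch using PBKDF2 from the standard library with a random salt per user. The iteration count is an assumption that you should tune for your own hardware, and many sites use a dedicated algorithm such as bcrypt, scrypt, or Argon2 instead.

```python
import hashlib
import hmac
import os

ITERATIONS = 200_000  # assumption: raise this as far as your server can afford


def hash_password(password, salt=None):
    """Return (salt, digest) for a password using PBKDF2-HMAC-SHA256."""
    salt = salt or os.urandom(16)
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return salt, digest


def verify_password(password, salt, expected_digest):
    """Check a login attempt against the stored salt and digest."""
    _, digest = hash_password(password, salt)
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(digest, expected_digest)


salt, stored = hash_password("correct horse battery staple")
print(verify_password("correct horse battery staple", salt, stored))  # True
print(verify_password("wrong guess", salt, stored))                   # False
```

Only the salt and digest are stored, never the password itself, so a stolen database doesn’t directly reveal anyone’s password.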

It might be easy to forget about legal and compliance issues when your business is just getting off the ground, but a single violation could cost you a ton of time and money. Be sure to write a privacy policy that complies with regulations like the GDPR and CCPA for customers in the EU and California, and store user data appropriately. It’s far cheaper to get a lawyer involved while writing a privacy policy than it is to defend yourself from a lawsuit. As much as they are a nuisance, cookie consent messages are a requirement in many locations and are relatively easy to implement. Last but not least, be sure to adequately credit photographers and other content producers to avoid copyright hassles in the future.

Not every lead comes from a Google search. Social media marketing is especially important today, and it’s used by most successful sites to attract customers. Regardless of where it’s shared, good content marketing is also an important tool in your toolbox. Instead of just promoting your service, content marketing like articles and videos also offers something of value to viewers. After learning something useful, they are more likely to be interested in checking out your product. You can use A/B testing, where different visitors will be given different content, to gauge the effectiveness of your marketing or any other element of your website.
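One common way to split visitors for an A/B test is to hash a visitor identifier so that the same person always sees the same version. The Python sketch below shows the idea; the function name, the visitor IDs, and the experiment name are all hypothetical.

```python
import hashlib


def assign_variant(visitor_id, experiment, variants=("A", "B")):
    """Deterministically assign a visitor to one variant of an experiment."""
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    bucket = int.from_bytes(hashlib.sha256(key).digest()[:8], "big")
    return variants[bucket % len(variants)]


# The same visitor gets the same variant on every visit.
print(assign_variant("visitor-123", "cta-button-color"))
print(assign_variant("visitor-123", "cta-button-color"))
```

Including the experiment name in the hash means a visitor who lands in group A for one test isn’t automatically in group A for every other test.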

Sometimes, however, you do need to resort to direct advertising. Marketing tools like Google Ads (formerly Google AdWords) let you put your ads right where your most interested customers are already looking.

An unreliable or broken site is certainly a source of frustration for visitors. Too many site owners fail to implement a good backup policy, aren’t immediately notified when their site goes down, and don’t keep everything up-to-date and secure. Cloud providers like AWS and GCP offer easy ways to save snapshots of your servers, preventing you from losing everything if something goes haywire. Try a service like UptimeRobot to get a message as soon as your site goes down. You don’t want to find out that your site doesn’t work when customers start calling you.

As much as a good visual design is an important part of creating a successful website, everything under the skin is equally important. Keeping your site easy to find and use, fast and secure, and in compliance with regulations is less obvious than a pretty façade, but these things are just as important if you want to retain your customers and attract new ones.

These days, most website owners are keenly aware of the important role that high-quality content plays in getting noticed by Google. To that end, businesses and digital marketers are spending increasingly large amounts of time and resources to ensure that websites are spotted by search engine robots and therefore found by their target audiences.

But while every website owner wants high search engine rankings and the corresponding increases in traffic, there are certain areas of a site that are best hidden from the search engine crawlers completely.

You might wonder why it’s good to keep crawlers from indexing parts of your website. In short, it can actually help your overall rankings. If you’ve spent lots of time, money and energy crafting high quality content for your audience, you need to make sure that search engine crawlers understand that your blog posts and main pages are much more important than the more “functional” areas of your website.

Here are a few examples of web pages that you might want the search robots to ignore:

As you can see, there are plenty of instances where you should be actively dissuading search engines from listing certain areas of your site. Hiding these pages helps to ensure that your homepage and cornerstone content gets the attention it deserves.

So how can you instruct search engine robots to turn a blind eye to certain pages of your website? The answer lies in noindex, nofollow and disallow. These instructions allow you to define exactly how you want your website to be crawled by search engines.

Let’s dive right in and find out how they work.

As you can probably imagine, adding a noindex instruction to a web page tells a search engine to “not index” that particular area of your site. The web page will still be visible if a user clicks a link to the page or types its URL directly into a browser, but it will never appear in a Google search, even if it contains keywords that users are searching for.

The noindex instruction is typically placed in the <head> section of the page’s HTML code as a meta tag:

<meta name="robots" content="noindex">

It’s also possible to change the meta tag so that only specific search engines ignore the page. For example, if you only want to hide the page from Google, allowing Bing and other search engines to list the page, you’d alter the code in the following way:

<meta name="googlebot" content="noindex">

A bit more difficult to configure and therefore less often used is delivering the noindex instruction as part of the server’s HTTP response headers:

HTTP/2.0 200 OK

…

X-Robots-Tag: noindex
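To check which of these signals a page actually sends, you could inspect both the HTML and the response headers. The Python sketch below is a simplified illustration: it assumes the headers are available as a dictionary with the exact key `X-Robots-Tag`, and it ignores the bot-specific form of that header. The class and function names are hypothetical.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect the directives of every named <meta> tag on a page."""

    def __init__(self):
        super().__init__()
        self.directives = {}  # lowercased meta name -> set of directives

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attrs = dict(attrs)
        name = (attrs.get("name") or "").lower()
        content = (attrs.get("content") or "").lower()
        if name:
            values = {part.strip() for part in content.split(",") if part.strip()}
            self.directives.setdefault(name, set()).update(values)


def is_noindex(html, headers=None, bot="googlebot"):
    """Return True if the page asks the given bot not to index it."""
    parser = RobotsMetaParser()
    parser.feed(html)
    parser.close()
    found = parser.directives.get("robots", set()) | parser.directives.get(bot, set())
    header = (headers or {}).get("X-Robots-Tag", "")
    found |= {part.strip().lower() for part in header.split(",")}
    return "noindex" in found


print(is_noindex('<head><meta name="robots" content="noindex"></head>'))    # True
print(is_noindex("<head></head>", headers={"X-Robots-Tag": "noindex"}))      # True
print(is_noindex('<meta name="googlebot" content="noindex">', bot="bingbot"))  # False
```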

These days, most people build sites using a content management system like WordPress, which means you won’t have to fiddle around with complicated HTML code to add a noindex instruction to a page. The easiest way to add noindex is by installing an SEO plugin such as All in One SEO or the ever-popular alternative from Yoast. These plugins allow you to apply noindex to a page by simply ticking a checkbox.

Adding a nofollow instruction to a web page doesn’t stop search engines from indexing it, but it tells them that you don’t want to endorse anything linked from that page. For example, if you are the owner of a large, high authority website and you add the nofollow instruction to a page containing a list of recommended products, the companies you have linked to won’t gain any authority (or rank increase) from being listed on your site.

Even if you’re the owner of a smaller website, nofollow can still be useful:

Even if your pages only contain internal links to other areas of your website, it can be useful to include a nofollow instruction to help search engines understand the importance and hierarchy of the pages within your site. For example, every page of your site might contain a link to your “Contact” page. While that page is super important and you’d like Google to index it, you might not want the search engine to place more weight on that page than other areas of your site, just because so many of your other pages link to it.

Adding a nofollow instruction works in exactly the same way as adding the noindex instruction introduced earlier, and can be done by altering the page’s HTML <head> section:

<meta name="robots" content="nofollow">

If you only want certain links on a page to be tagged as nofollow, you can add rel="nofollow" attributes to the links’ HTML tags:

<a href="https://www.example.com/" rel="nofollow">example link</a>
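To see at a glance which links on a page carry the attribute, you could sort them into two groups. This Python sketch does that for a snippet of HTML; the class name and sample links are made up for the example.

```python
from html.parser import HTMLParser


class LinkRelParser(HTMLParser):
    """Sort the links on a page into followed and nofollowed lists."""

    def __init__(self):
        super().__init__()
        self.followed = []
        self.nofollowed = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attrs = dict(attrs)
        href = attrs.get("href")
        if not href:
            return
        # rel can hold several space-separated values, e.g. "nofollow noopener".
        rel_tokens = (attrs.get("rel") or "").lower().split()
        target = self.nofollowed if "nofollow" in rel_tokens else self.followed
        target.append(href)


parser = LinkRelParser()
parser.feed(
    '<a href="https://www.example.com/" rel="nofollow">example link</a> '
    '<a href="/contact">Contact</a>'
)
parser.close()
print("followed:", parser.followed)      # ['/contact']
print("nofollowed:", parser.nofollowed)  # ['https://www.example.com/']
```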

WordPress website owners can also use the aforementioned All in One SEO or Yoast plugins to mark the links on a page as nofollow.

The last of the instructions we are discussing in this blog post is “disallow.” You might be thinking that this sounds a lot like noindex, but while the two are very similar, there are slight differences:

As you can see, disallowing a page means that you’re telling the search engine robots not to crawl it at all, which signifies that it has no use at all for SEO. Disallow is best used for the pages on your site that are completely irrelevant to most search users, such as client login areas or “thank you” pages.

Unlike noindex and nofollow, the disallow instruction isn’t included in a page’s HTML code or HTTP response, but instead is placed in a separate file named “robots.txt.”

A robots.txt file is a simple plain text file that can be created with any basic text editor and sits at the root of your site (www.example.com/robots.txt). Your site doesn’t need a robots.txt for search engines to crawl it, but you will need one if you want to use the disallow directive to block access to certain pages. To do that, you’ll simply list the relevant parts of your site on the robots.txt file like this:

User-agent: *

Disallow: /path/to/your/page.html
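Before relying on a rule, you can test how a crawler would interpret it. The Python sketch below uses the standard library’s `urllib.robotparser` module to evaluate the two-line example above without touching your live site. The `is_allowed` helper is a hypothetical name, and individual search engines may interpret edge cases slightly differently.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: *
Disallow: /path/to/your/page.html
"""


def is_allowed(robots_txt, url, user_agent="*"):
    """Return True if the given robots.txt text lets user_agent crawl url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


print(is_allowed(ROBOTS_TXT, "https://www.example.com/path/to/your/page.html"))  # False
print(is_allowed(ROBOTS_TXT, "https://www.example.com/blog/"))                   # True
```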

WordPress website owners can use the All in One SEO plugin to quickly generate their own robots.txt file without the need to access the content management system’s underlying file structure directly.

Do you know for certain which parts of your website are marked as noindex and nofollow or are excluded from being indexed by a disallow rule? If you are not sure, you might consider taking an inventory and reviewing your past decisions.

One way to do such an inventory is to go to www.drlinkcheck.com, enter the URL of your site’s homepage, and hit the Start Check button.

Dr. Link Check’s primary function is to reveal broken links, but the service also provides detailed information on working links.

After the crawl of your site is complete, switch to the All Links report and create a filter to only show page links tagged as noindex:

You now have a custom report that shows you the pages that contain a noindex tag or have a noindex X-Robots-Tag HTTP header.

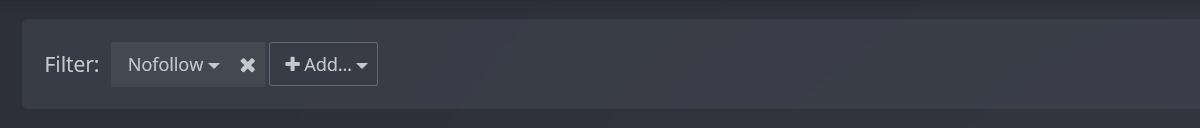

If you want to see all links that are marked as nofollow, switch to the All Links report, click on Add… in the filter bar, and select Nofollow/Dofollow from the drop-down menu.

By default, the Dr. Link Check crawler ignores all links disallowed by the rules found in the site’s robots.txt file. You can change that in the project settings:

Now switch to the Overview report and hit the Rerun check button to start a new crawl with the updated settings.

After the crawl has finished, open the All Links report, click on Add… in the filter section, and select robots.txt status to limit the list to links disallowed by your website’s robots.txt file.

While the vast majority of website owners are far more interested in getting the search engines to notice the pages of their websites, the noindex, nofollow and disallow instructions are powerful tools to help crawlers better understand a site’s content, and they indicate which sections of the site should be hidden from search engine users.

When building a website, it’s important to consider how easy it is for visitors to navigate to subpages from the homepage. The homepage, of course, will likely generate the most traffic. If visitors can’t easily navigate to lower-level pages from there, your website’s performance will suffer. You can help visitors access relevant subpages by improving your website’s click depth.

Also known as page depth, click depth refers to the total number of internal links, starting from the homepage, visitors must click through to access a given page on the same website. Each click adds another level of click depth to the respective page. The more links a visitor must click through to access a page, the higher the page’s click depth will be.

Your website’s homepage has a click depth level of zero. Any subpages linked directly from the homepage have a click depth level of one, meaning visitors must click a single internal link to access them from the homepage.
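Click depth is simply the shortest path from the homepage to a page, so it can be computed with a breadth-first search over your internal links. Here is a minimal Python sketch; the `site` dictionary is a made-up example in which each page maps to the pages it links to.

```python
from collections import deque


def click_depths(links, homepage="/"):
    """Return the minimum number of clicks from the homepage to each reachable page."""
    depths = {homepage: 0}
    queue = deque([homepage])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical site structure: page -> pages it links to.
site = {
    "/": ["/shop", "/blog"],
    "/shop": ["/shop/jeans"],
    "/shop/jeans": ["/shop/jeans/slim-fit"],
    "/blog": ["/shop/jeans"],
}
for page, depth in click_depths(site).items():
    print(depth, page)
```

Pages that never show up in the result can’t be reached from the homepage by clicking at all, which is worth investigating in its own right.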

Click depth is a metric that affects user experience and, therefore, search rankings. Visitors typically want to access subpages easily, with as few links as possible. If a subpage requires a half-dozen or more clicks to access from the homepage, visitors may abandon your website in favor of a competitor’s site.

Google has confirmed that it uses click depth as a ranking signal. John Mueller, Senior Webmaster Trends Analyst at Google, talked about the impact of click depth during a Q&A session. According to Mueller, subpages with a low click depth are considered more important by Google than those with a high click depth. When visitors can access a subpage in just a few clicks from the homepage, it tells Google that the subpage is highly relevant. As a result, Google will give the subpage greater weight in the search engine results pages (SERPs).

An easy way to determine if a site suffers from high click depth is to run a crawl with Dr. Link Check. Even though Dr. Link Check’s primary function is to find broken links, the service can also be used for filtering links based on their depth:

Now you have a list of all internal page links with a click depth higher than five.

If the crawl revealed pages with a high click depth, it’s time to decide what to do about it. Here are five tips that will help you create a strategy for improving your site’s link structure.

The hierarchy of your website’s navigation menu will affect your site’s average click depth. If you use a broad hierarchy with just a few top-level categories and many lower-level categories, you can expect a higher average click depth, because visitors must click through multiple category levels to access lower-level subpages.

Using a narrow hierarchy for your website’s navigation menu, on the other hand, promotes a lower average click depth. With a narrow hierarchy, your website’s navigation menu will have more top-level categories and fewer lower-level categories, which should allow visitors to access subpages in fewer clicks.

When creating articles, guides, blog posts or other content for your website, include internal links to relevant subpages. Without internal links embedded in content, visitors will have to rely on your website’s navigation menu to locate subpages. Internal links in content offer a faster way for visitors to find and access subpages, which helps keep your website from suffering from a high average click depth.

Keep in mind that internal links are most effective at improving click depth when published on subpages with a low click depth. You can add internal links to all your website’s subpages, but those published on subpages with a click depth level of one to three are most beneficial because they are close to the homepage.

Another way to improve your website’s click depth is to use breadcrumbs for supplemental navigation. What are breadcrumbs? In the context of web development, the term “breadcrumbs” refers to links in a user-friendly navigation system that shows visitors the depth of a subpage’s location in relation to the homepage. An e-commerce website, for instance, may use the following breadcrumbs on the product page for a pair of men’s jeans: Homepage > Men’s Apparel > Jeans > Product Page. Visitors to the product page can click the breadcrumb links to go up one or more levels.

Breadcrumbs shouldn’t be used as a substitute for your website’s navigation menu. Rather, you should use them as a supplemental form of navigation. Add breadcrumbs to each subpage to show visitors where they are currently located on your website in relation to the homepage. You can add breadcrumbs manually, or if your website is built on WordPress, you can use a plugin to add them automatically. Yoast SEO and Breadcrumb NavXT are two popular plugins that feature breadcrumbs. Once they are activated, you can configure either of these plugins to automatically integrate breadcrumbs into your website’s pages and posts.
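If you add breadcrumbs manually, the trail can often be derived from the page's URL. The following is a rough sketch, assuming your URL paths mirror your site hierarchy (which is not true of every site). The function name and example path are made up for illustration.

```python
def breadcrumb_trail(path, home_label="Homepage"):
    """Return a list of (label, url) pairs from the homepage down to `path`."""
    trail = [(home_label, "/")]
    url = ""
    for segment in path.strip("/").split("/"):
        if not segment:  # skip empty segments, e.g. for the homepage itself
            continue
        url += "/" + segment
        # Turn a slug like "mens-apparel" into a readable label
        label = segment.replace("-", " ").title()
        trail.append((label, url))
    return trail

# Hypothetical product page URL
trail = breadcrumb_trail("/mens-apparel/jeans/slim-fit")
print(" > ".join(label for label, _ in trail))
```

Each pair in the trail can then be rendered as a link, so visitors can jump up one or more levels.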

You can also use a visitor sitemap to lower your website’s average click depth. Not to be confused with search engine sitemaps, visitor sitemaps live up to their name by targeting visitors. Like search engine sitemaps, they contain links to all of a website’s pages, including the homepage and all subpages. The difference is that visitor sitemaps feature a user-friendly HTML format, whereas search engine sitemaps feature a user-unfriendly XML format.

After creating a visitor sitemap, add a site-wide link to it somewhere in your website’s template, such as the footer. Once published, the visitor sitemap will instantly lower the click depth of most or all of your website’s subpages.
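A visitor sitemap is ultimately just an HTML list of links. As a small sketch, assuming you already have a list of page URLs and titles (the `pages` data below is invented), the markup could be generated like this:

```python
from html import escape

def render_sitemap(pages):
    """Render (url, title) pairs as a plain HTML list of links."""
    items = "\n".join(
        # Escape both the URL and the title so special characters stay valid HTML
        f'  <li><a href="{escape(url, quote=True)}">{escape(title)}</a></li>'
        for url, title in pages
    )
    return f"<ul>\n{items}\n</ul>"

# Hypothetical pages
pages = [("/", "Home"), ("/jeans?fit=slim&size=32", "Jeans & Denim")]
markup = render_sitemap(pages)
print(markup)
```

For larger sites you would probably group the links by category instead of emitting one flat list.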

While optimizing your website for a lower average click depth can improve its performance, you shouldn’t overdo it. Linking to all your website’s subpages directly from the homepage won’t work. Depending on the type of website you operate, as well as its age, your site may have hundreds or even thousands of subpages. Linking to each one creates a messy and cluttered homepage without any sense of structure.

A high average click depth sends the message that your website’s subpages aren’t important. At the same time, it fosters a negative user experience by forcing visitors to click through an excessive number of internal links. The good news is you can lower your website’s average click depth by using a narrow hierarchy for the navigation menu, including internal links in content, using breadcrumbs and creating a visitor sitemap. These strategies will help you improve your site rankings as well as improve your visitors’ experience.

Links are the very foundation of the web. They connect web resources with each other and make it possible for visitors to navigate between pages and allow pages to reference images and other content.

Unfortunately, unlike diamonds, links are not forever. They have a tendency to break over time. Companies go out of business, servers are shut down, blog posts get deleted, domains expire… the web is dynamic, and there are lots of reasons why a link that works today might stop working tomorrow.

At best, a broken link is merely annoying and results in a poor user experience. At worst, it can pose a security threat to anyone visiting the website.

Imagine what could happen if Google shuts down their Analytics service and later lets the google-analytics.com domain expire. There would be millions of websites left with obsolete script code that attempts to load and run code from https://www.google-analytics.com/analytics.js. A third party could snatch up the expired domain and serve malicious JavaScript code under this URL. This is one form of an attack called Broken Link Hijacking.

Broken Link Hijacking is an exploit in which an attacker gains control over the target of a broken link.

Typical candidates for link hijacking include:

Depending on how the hijacked link is embedded into the website’s code, there are different ways to exploit the vulnerability, with varying levels of risks.

If you have embedded an external script into your website (using code like this: <script src="https://example.com/script.js"></script>) and the link’s domain name gets taken over, an attacker can inject arbitrary code into the site.

You might ask what harm could come from some extra JavaScript code. The answer is plenty. Here are a few examples of how an attacker could exploit this vulnerability:

The ability to execute attacker-supplied code basically makes this a Stored Cross-Site Scripting (XSS) vulnerability, which Bugcrowd classifies as a P2 (high risk) issue.

A hijacked link to an image (<img src="https://example.com/image.jpg">) or style sheet (<link href="https://example.com/styles.css" rel="stylesheet">) is not as bad as a hijacked script link, but can still have serious security implications:

For example, a hijacked style sheet can be used to swap in images (background: url("https://example.net/hacked.gif")) and to inject text (body::before { content: "HACKED!" }).

Attacks like these are often referred to as defacement or content spoofing and typically fall into Bugcrowd’s P4 (low risk) category.

It’s also worth noting that each request made to an attacker-controlled external server leaks information about both the website and the visitor. The attacker is able to track who visits the site (IP address, browser user-agent, referring website) and how often.
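To get a feeling for how exposed a page is, it helps to list every script, image and style sheet it loads from another host. The sketch below uses Python's standard HTML parser. The class name, the sample markup and the www.mysite.test host are illustrative assumptions, and a real audit would also need to cover other embedding mechanisms (iframes, CSS imports, fonts and so on).

```python
from html.parser import HTMLParser
from urllib.parse import urlparse

class ExternalResourceFinder(HTMLParser):
    """Collect scripts, images and style sheets that are loaded from other hosts."""

    def __init__(self, own_host):
        super().__init__()
        self.own_host = own_host
        self.external = []  # (tag, url) pairs

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag in ("script", "img"):
            url = attrs.get("src")
        elif tag == "link" and "stylesheet" in (attrs.get("rel") or "").lower().split():
            url = attrs.get("href")
        else:
            return
        # Relative URLs have no hostname and therefore count as internal
        host = urlparse(url or "").hostname
        if host and host != self.own_host:
            self.external.append((tag, url))

# Hypothetical page markup
page = """
<script src="https://example.com/script.js"></script>
<img src="/images/logo.png">
<link href="https://example.com/styles.css" rel="stylesheet">
"""
finder = ExternalResourceFinder("www.mysite.test")
finder.feed(page)
print(finder.external)
```

Every host that shows up in the result is a domain your visitors' browsers contact on your behalf, and therefore a domain worth monitoring.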

When you link to an external page from your site (<a href="https://example.com/">Link</a>), this link can be seen as a recommendation. You are indicating that the content of the page is relevant and worth a visit, otherwise you wouldn’t have included the link as part of your own content.

Gaining access to the target of the link allows an attacker to exploit the trust that your visitors give you and your recommendation in order to:

This is basically an impersonation attack. The attacker pretends that the linked website is legitimate and from a trusted source. Bugcrowd rates Impersonation via Broken Link Hijacking as P4 (low risk).

Subresource Integrity (SRI) allows you to ensure that linked scripts and style sheets are only loaded if they haven’t changed since the page was published. This is accomplished by computing a cryptographic hash of the content and adding it to the <script> or <link> element via the integrity attribute (as a base64-encoded string):

<script src="https://example.com/script.js" integrity="sha384-/u6/iE9tq+bsqNaONz1r5IjNql63ZOiVKQM2/+n/lpaG8qnTYumou93257LhRV8t" crossorigin="anonymous"></script>

Before executing a script or applying a style sheet, the browser compares the requested resource to the expected integrity hash value and discards it if the hashes don’t match.
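The integrity value is simply the hash algorithm's name, a hyphen, and the base64-encoded digest of the file's bytes. Here is a minimal sketch of how such a value could be computed; the function name and the sample script content are placeholders, and in practice you would hash the exact bytes that the external server delivers.

```python
import base64
import hashlib

def sri_hash(content: bytes, algorithm: str = "sha384") -> str:
    """Return a Subresource Integrity value such as 'sha384-...' for `content`."""
    digest = hashlib.new(algorithm, content).digest()
    return f"{algorithm}-{base64.b64encode(digest).decode('ascii')}"

# Placeholder script content; use the real file's bytes instead
script = b"console.log('hello');"
print(f'integrity="{sri_hash(script)}"')
```

Keep in mind that the hash must be regenerated whenever the external file legitimately changes, otherwise the browser will refuse to load it.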

By adding a Content-Security-Policy HTTP header to your server’s responses, you can restrict which domains resources can be loaded from:

Content-Security-Policy: default-src 'self' example.net *.example.org

In this example, resources (such as scripts, style sheets, images, etc.) may only be requested from the site’s own origin ('self', excluding subdomains), example.net (excluding subdomains) and any subdomain of example.org (*.example.org). Requests to other origins are blocked by the browser.
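To illustrate how these source expressions are matched, here is a deliberately simplified sketch. It only understands 'self', exact host names and *. wildcards, while real browsers also take schemes, ports and paths in the policy into account. The function name and the www.mysite.test origin are assumptions made for this example. Note that in the CSP specification a *. wildcard matches subdomains, but not the bare domain itself.

```python
from urllib.parse import urlparse

def host_allowed(url, page_origin, sources):
    """Simplified check of `url` against a list of CSP source expressions."""
    target = urlparse(url)
    host = (target.hostname or "").lower()
    for source in sources:
        if source == "'self'":
            # Same origin: scheme, host and port must all be identical
            origin = urlparse(page_origin)
            if (target.scheme, host, target.port) == (origin.scheme, origin.hostname, origin.port):
                return True
        elif source.startswith("*."):
            # "*.example.org" -> suffix ".example.org" (subdomains only)
            if host.endswith(source[1:]):
                return True
        elif host == source:
            return True
    return False

policy = ["'self'", "example.net", "*.example.org"]
origin = "https://www.mysite.test"  # hypothetical site
for url in ("https://www.mysite.test/app.js",
            "https://cdn.example.net/lib.js",
            "https://static.example.org/a.css"):
    print(url, host_allowed(url, origin, policy))
```

A check like this can be handy for scanning old pages for resource URLs that your policy would block before you actually roll the header out.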

A Content Security Policy doesn’t help when one of your trusted domains gets hijacked, but it does make sure that you don’t accidentally embed resources from unexpected sources, whether that’s due to a simple typo or an obsolete link on an old and long-forgotten page.

Broken links happen. And when they do, it’s always better to know sooner than later, before an attacker might exploit the issue. Our link checker, Dr. Link Check, allows you to schedule regular scans of your website and notifies you of new link problems by email. Our crawler not only looks for typical issues like 404s, timeouts, and server errors, but also checks if links lead to parked domains.

Quite often, redirects are an early indicator that a link might break soon. When a website is redesigned and restructured, redirects are used to map the old URL structure to the new one. This typically works fine for the first redesign, but with each new restructure, the redirect chains get longer and longer, with more potential breaking points. It’s therefore advisable to keep a close eye on redirected links and update them if necessary.
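The growth of redirect chains is easy to picture with a small model. The sketch below follows a hypothetical mapping of old URLs to new URLs (for example, collected from several site redesigns) and returns every hop along the way. It does not make any network requests, and the paths are invented.

```python
def redirect_chain(url, redirects, max_hops=10):
    """Follow `redirects` (old URL -> new URL) from `url` and return all URLs visited."""
    chain = [url]
    while url in redirects and len(chain) <= max_hops:
        url = redirects[url]
        if url in chain:  # redirect loop: record it and stop
            chain.append(url)
            break
        chain.append(url)
    return chain

# Hypothetical redirects left behind by three redesigns
redirects = {
    "/old-shop": "/shop",
    "/shop": "/store",
    "/store": "/store/all",
}
chain = redirect_chain("/old-shop", redirects)
print(" -> ".join(chain))
```

Any link whose chain has more than two entries could be updated to point directly at the final URL, which removes the intermediate breaking points.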

In order to identify redirects on your website, run a scan in Dr. Link Check and click on one of the items in the Redirects section of the Overview report to see the details.

A broken external link doesn’t just disrupt the visitor experience; it can also have serious security implications. An attacker might be able to hijack the broken link and gain control over the link’s target. In the worst case, this can lead to an account takeover and the theft of sensitive data.

Using modern browser security features like Subresource Integrity and Content Security Policy, you can mitigate these risks. Regular crawls with a broken link checker help you identify broken links early and reduce the attack surface.