FTP, short for File Transfer Protocol, is an old standard for transferring files from one computer to another. The protocol was first proposed in 1971, long before the advent of the modern TCP/IP-based internet. In spite of its age, FTP is still commonly used, and hundreds of thousands of websites link to files stored on FTP servers (using URLs that start with ftp://).

Until recently, that wasn’t an issue. All major browsers had built-in support for FTP and were able to handle ftp:// links. This situation is changing. The developers of Chrome disabled FTP in version 88 (released on January 19, 2021), and it’s likely that other browsers will follow suit.

The rationale behind this decision is that FTP in its original form is an insecure protocol that doesn’t support encryption. This is understandable, but practically, it breaks existing ftp:// links for the majority of users.

If you want to make sure that your website is free of ftp:// links, follow the steps below.

Go to https://www.drlinkcheck.com/, enter the URL of your website, and press the Start Check button.

Wait until the check is complete and the website is fully crawled. The number of found ftp:// links is displayed in the Link Schemes section. If there are no ftp: items in the list, the crawler didn’t locate any ftp:// links on your site and you are all good and can skip the rest of the post.

Click the ftp: item under Link Schemes to get to the list of ftp:// links and review each item in the list. If you hover over a link and hit the Details button, you can see which pages contain the link (under Linked from). A click on Source will show you the exact location in the HTML source code.
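If you want to double-check a single page yourself, the underlying idea is simple: parse the HTML and inspect every URL attribute. The Python sketch below uses only the standard library's html.parser. It is a hypothetical helper, not how Dr. Link Check works, and it assumes that only href and src attributes matter (srcset, inline CSS, and JavaScript are ignored).

```python
from html.parser import HTMLParser

# Attributes checked for URLs (an assumption; srcset, inline CSS and scripts are ignored).
URL_ATTRIBUTES = {"href", "src"}


class FtpLinkFinder(HTMLParser):
    """Collects (tag, attribute, url) for every URL that uses the ftp:// scheme."""

    def __init__(self):
        super().__init__()
        self.ftp_links = []

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            # Valueless attributes arrive as None, so skip them.
            if name not in URL_ATTRIBUTES or not value:
                continue
            url = value.strip()
            # URL schemes are case-insensitive, so FTP:// is matched as well.
            if url.lower().startswith("ftp://"):
                self.ftp_links.append((tag, name, url))


def find_ftp_links(html):
    """Return all ftp:// URLs found in href/src attributes of an HTML string."""
    finder = FtpLinkFinder()
    finder.feed(html)
    finder.close()
    return finder.ftp_links


page = '<a href="ftp://ftp.example.com/file.zip">Download</a> <a href="https://example.com/">Home</a>'
print(find_ftp_links(page))  # → [('a', 'href', 'ftp://ftp.example.com/file.zip')]
```

Self-closing tags such as <img src="..."/> are covered too, because HTMLParser passes them to handle_starttag by default.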

Now it’s time to decide what to do with the found links. Here are a few options:

https:// URL you can link to.

Fifty years after its introduction, FTP is slowly being phased out as a protocol for serving files on the internet. With major browsers dropping support for FTP, now is a good time to clean up your website and get rid of all FTP links. You surely don’t want your website to appear outdated and broken.

Links are one of the most important factors in how search engines determine the ranking of a website. If you place a link from your website to another, search engines consider this a vote for the relevance and quality of the linked content. Just like a recommendation to friends or family in real life, a link is something that you put your good name behind.

If you don’t want to endorse a link, adding rel="nofollow" tells search engines to ignore it when ranking pages. This makes sense for links to sites that you want to warn your visitors about (like a scam or a hack), user-generated links that you haven’t reviewed yet (like those in the comments section of a blog), or links in ads that you were paid to place on your website.

Considering the importance of marking links as nofollow (or not), it’s a good idea to periodically check if all your outbound links are correctly qualified. With a small website of only a few pages, you may be able to do this by hand, but larger sites require an automated solution. This is where Dr. Link Check comes into play. Our service is not only a great broken link checker, but can also give you an inventory of all the links on your website.

Head to the Dr. Link Check homepage, enter the address of your website, and click on Start Check.

While crawling your website, Dr. Link Check displays the number of found Dofollow and Nofollow links under Dofollow/Nofollow on the Overview page. Click on Nofollow to list the found links.

As you are probably only interested in links pointing to other websites, limit the report to outbound links by clicking the Add button in the Filter bar at the top and selecting Direction from the menu.

The list of links might also contain links found in image (<img src=...>) or script (<script src=...>) tags. If you want to restrict the report to normal page links, click Add again and select Link type from the filter menu.

With these two filters, you've now identified all outbound nofollow links on your website. If you want to see all dofollow links instead, simply click on Nofollow in the filter bar and select Dofollow from the menu.
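The two filters boil down to a simple rule: keep <a> links whose host differs from your own, then check whether the space-separated rel value contains the token nofollow. Here is a minimal Python sketch of that logic for a single page. It assumes hosts are compared exactly (so www.example.com and example.com count as different sites), and outbound_links is a hypothetical helper, not part of Dr. Link Check.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlsplit


class OutboundLinkCollector(HTMLParser):
    """Collects outbound <a href> links and whether their rel contains nofollow."""

    def __init__(self, page_url):
        super().__init__()
        self.page_url = page_url
        self.page_host = urlsplit(page_url).hostname
        self.links = []  # list of (absolute_url, is_nofollow)

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return  # like the "Link type" filter: normal page links only
        attributes = dict(attrs)
        href = attributes.get("href")
        if not href:
            return
        url = urljoin(self.page_url, href)  # resolve relative links
        parts = urlsplit(url)
        if parts.scheme not in ("http", "https"):
            return  # skip mailto:, javascript:, ftp:, ...
        if parts.hostname == self.page_host:
            return  # like the "Direction" filter: outbound links only
        # rel is a space-separated token list, e.g. rel="nofollow noopener".
        rel_tokens = (attributes.get("rel") or "").lower().split()
        self.links.append((url, "nofollow" in rel_tokens))


def outbound_links(html, page_url):
    """Return (url, is_nofollow) for every outbound <a> link in the HTML."""
    collector = OutboundLinkCollector(page_url)
    collector.feed(html)
    collector.close()
    return collector.links
```

Splitting rel into tokens matters: a substring check would wrongly treat a hypothetical rel="nofollowing" as nofollow.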

If you have a paid subscription (Standard plan and above), you can create rules for which links to follow and which links to ignore.

While previously a rule could only be based on the URL of a link (example: Url ENDSWITH ".gif"), it’s now also possible to include or exclude links based on where they are found in the HTML code. As an example, the following exclude rule makes our crawler ignore all <a> tags found inside HTML elements that have class="footer":

HtmlElement = ".footer a"
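To make the descendant relationship concrete, here is a rough Python sketch of what .footer a selects: every <a> tag that has some ancestor whose class list includes footer. It is an illustration only, not Dr. Link Check's matcher, and it assumes reasonably well-formed HTML (it does not model the implicit tag closing a real browser parser performs).

```python
from html.parser import HTMLParser

# Void elements have no end tag, so they are never pushed onto the ancestor stack.
VOID_ELEMENTS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
                 "link", "meta", "source", "track", "wbr"}


class FooterLinkFinder(HTMLParser):
    """Approximates the selector ".footer a" with a stack of open elements."""

    def __init__(self):
        super().__init__()
        self.stack = []    # (tag, has_footer_class) for each currently open element
        self.matches = []  # href values of <a> tags matched by ".footer a"

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        classes = (attributes.get("class") or "").split()
        # "A B" (descendant): the <a> needs *some* ancestor matching ".footer".
        if tag == "a" and any(has_footer for _, has_footer in self.stack):
            self.matches.append(attributes.get("href"))
        if tag not in VOID_ELEMENTS:
            self.stack.append((tag, "footer" in classes))

    def handle_endtag(self, tag):
        # Close the most recent open element with this name (and anything left open
        # inside it); end tags without a matching open element are ignored.
        for index in range(len(self.stack) - 1, -1, -1):
            if self.stack[index][0] == tag:
                del self.stack[index:]
                break


def footer_links(html):
    """Return the href of every <a> tag inside an element with class "footer"."""
    finder = FooterLinkFinder()
    finder.feed(html)
    finder.close()
    return finder.matches
```

The ancestor can sit any number of levels up. That is the difference from .footer > a, which would only match <a> tags whose direct parent has the class.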

If you have worked with CSS before, the syntax should already be familiar to you. The table below lists the supported ways of selecting HTML elements:

| Selector | Description |
|---|---|
| .class | Selects all elements that have the specified class |
| #id | Selects an element based on the value of its ID attribute |
| element | Selects all elements that have the specified tag name |
| [attr] | Selects all elements that have an attribute with the specified name |
| [attr=value] | Selects all elements that have an attribute with the specified name and value |
| [attr~=value] | Selects all elements that have an attribute with the specified name and a value containing the specified word (which is delimited by spaces) |
| [attr\|=value] | Selects all elements that have an attribute with the specified name and a value equal to the specified string or prefixed with that string followed by a hyphen (-) |
| [attr^=value] | Selects all elements that have an attribute with the specified name and a value beginning with the specified string |
| [attr$=value] | Selects all elements that have an attribute with the specified name and a value ending with the specified string |
| [attr*=value] | Selects all elements that have an attribute with the specified name and a value containing the specified string |
| * | Selects all elements |
| A B | Selects all elements selected by B that are inside elements selected by A |
| A > B | Selects all elements selected by B whose parent is an element selected by A |
| A ~ B | Selects all elements selected by B that follow an element selected by A (with the same parent) |
| A + B | Selects all elements selected by B that immediately follow an element selected by A (with the same parent) |
| A, B | Selects all elements selected by A as well as all elements selected by B |
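The attribute operators in the table are the easiest part to mix up. The Python function below spells out their semantics as defined for CSS selectors, including the rule that ^=, $=, and *= never match an empty value. Whether Dr. Link Check follows every edge case, such as case sensitivity, is an assumption.

```python
def attribute_matches(operator, actual, expected=None):
    """Return True if an attribute value `actual` satisfies [attr<operator>expected].

    `actual` is None when the attribute is missing; operator None means plain [attr].
    Comparisons are case-sensitive, as they are for most attributes in CSS.
    """
    if actual is None:
        return False  # a missing attribute never matches
    if operator is None:
        return True  # [attr]: presence alone is enough
    if operator == "=":
        return actual == expected
    if operator == "~=":
        # Whitespace-separated word list, e.g. rel="nofollow noopener".
        return expected in actual.split()
    if operator == "|=":
        # Exact value or value followed by a hyphen, e.g. hreflang="en-us" for "en".
        return actual == expected or actual.startswith(expected + "-")
    if operator == "^=":
        return expected != "" and actual.startswith(expected)
    if operator == "$=":
        return expected != "" and actual.endswith(expected)
    if operator == "*=":
        return expected != "" and expected in actual
    raise ValueError(f"unsupported operator: {operator}")
```

For example, attribute_matches("~=", "nofollow noopener", "nofollow") is True, while attribute_matches("*=", "nofollowing", "nofollow") is also True, which is why ~= is the safer choice for rel values.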

Equipped with this knowledge, it’s possible to construct quite powerful rules:

| Example | Matched HTML elements |
|---|---|
| HtmlElement = "#search > a, #filter > a" | <a> tags directly under elements with the IDs search or filter |
| HtmlElement = "a[rel~=nofollow]" | <a> tags whose rel attribute contains the word nofollow, such as rel="nofollow" or rel="nofollow noopener" |
| HtmlElement = "img[src$=.png]" | <img> tags with an src attribute value ending in .png |
| HtmlElement = ".comments *" | Everything inside elements with a comments class |
| HtmlElement = "head > link[rel=alternate][hreflang=en-us]" | All <link rel="alternate" hreflang="en-us"> elements directly inside the <head> tag |

This feature has been in beta for a while and has proven to be quite a valuable addition. We hope you will find it as useful as we do. If you run into any problems or have a suggestion, please drop us a note.

As the owner of Dr. Link Check, a broken link checker app, I have a love-hate relationship with 404 pages. On the one hand, I do everything I can to help our customers find and fix broken links and keep website visitors from running into 404 errors. On the other hand, I truly enjoy a unique and creative 404 page that sets a positive tone and lightens the mood.

While there are still too many websites that use the plain and dull default pages that come with their web servers, some custom 404 pages are gorgeous masterpieces. These hidden error pages are often the places where web designers can show their creativity and express themselves outside the bounds of what was specified by corporate.

For this article, I modified our crawler to search the web for the most beautiful 404 pages. The crawler went through a total of 500,000 websites, reducing the number of candidates to a few thousand, which I then manually narrowed down to the 40 pages presented below.

I hope these designs will give you the inspiration you need to create your own distinctive 404 page. Crafting a pretty error page is not only fun but also makes sense from a customer conversion point of view. An appealing 404 page design can take away some of the bitterness of the broken link that a user just encountered. There’s a good chance the user will be pleasantly surprised and stay on your site rather than immediately hitting the back button and never visiting you again.

Now, enough with the chit-chat and on to why you’re actually here: the 404 pages.

Compiling this list of 404 pages was a fun thing for me to do. I hope you enjoyed the article and found some inspiration for your own website projects. If you already have a unique 404 page that you’re proud of, or have designed one based on this article, please message me via Twitter @wulfsoft and tell me about it. I’d love to see your creations.

A slow website isn’t just frustrating for potential customers – it harms your search rankings and makes your site look unprofessional. Decreasing the number of HTTP requests that browsers make while loading one of your pages is an easy way to speed everything up without splurging on expensive hosting. There are plenty of tools you can use to figure out what kinds of requests your site is making and minimize the ones that aren’t needed.

An HTTP request is simply the way that a piece of content (an image or a stylesheet, for example) makes its way from your server to your users’ devices. When you load a page in your browser and something like an image needs to be loaded, your browser will make a request to the server hosting that file. Lots of small requests can take a long time to load compared to one larger request, because one round trip across the network often takes longer than the actual download.
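A back-of-the-envelope model shows why round trips dominate. The Python sketch below assumes requests go out in batches of `parallel` at a time, with each batch costing one full round trip. It ignores connection setup, TLS handshakes, and TCP slow start, and the figures in the example calls are made up for illustration.

```python
import math


def estimated_load_ms(num_requests, total_kb, rtt_ms, bandwidth_mbps, parallel=1):
    """Very rough page-load estimate in milliseconds.

    Assumes requests go out in batches of `parallel`, each batch costs one round
    trip, and all bytes then share the link's bandwidth. Connection setup, TLS,
    and TCP slow start are ignored. 1 KB is taken as 1,000 bytes.
    """
    round_trips = math.ceil(num_requests / parallel)
    # 1 Mbps = 1,000 kilobits per second = 1 kilobit per millisecond.
    transfer_ms = total_kb * 8 / bandwidth_mbps
    return round_trips * rtt_ms + transfer_ms


# Made-up example: 300 KB of assets, 100 ms round trip, 20 Mbps connection.
print(estimated_load_ms(30, 300, 100, 20))              # 30 files, one after another → 3120.0
print(estimated_load_ms(1, 300, 100, 20))               # one combined file → 220.0
print(estimated_load_ms(30, 300, 100, 20, parallel=6))  # 30 files over 6 connections → 620.0
```

Under these assumptions, the transfer itself takes only 120 ms in every case. The difference comes entirely from the number of round trips. Browsers commonly open up to six connections per host over HTTP/1.1, which the `parallel` parameter approximates.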

Before you can reduce the number of requests that your website makes, you'll need to figure out where they’re coming from and how long each one takes. Conveniently, all major desktop web browsers offer developer tools that include network profiling functionality. To open DevTools in Chrome or Firefox, press F12 (or Cmd+Opt+I on macOS). You might be greeted with a face full of HTML tags and CSS selectors, but don’t be alarmed. Click the “Network” tab to open the network profiling tools.

Try opening another page on your site: you should see a list of requests, along with fields for, at least, status, domain, size, and time. In the waterfall chart at the right, you can see when each HTTP request started and finished loading.

To get a better idea of what a new visitor would experience when loading your website for the first time, try force-reloading your website (Ctrl+Shift+R, or Cmd+Shift+R on macOS). This prevents your browser from loading elements from its cache and forces it to make actual HTTP requests for them. After a normal reload, you can tell which requests were cached by the rows that say “(memory cache)” or “(disk cache)” (Chrome) or “cached” (Firefox) in the “Size” or “Transferred” columns.
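Chrome and Firefox can also export the Network tab as a HAR file (HTTP Archive, a JSON format), which makes it easy to tally requests per domain. The Python sketch below assumes the standard HAR layout, with log.entries[].request.url holding the URL and each entry's time field holding the total request time in milliseconds. The file name in the usage note is a placeholder.

```python
import json
from collections import Counter
from urllib.parse import urlsplit


def load_har(path):
    """Read a HAR file exported from the browser's Network tab."""
    with open(path, encoding="utf-8") as f:
        return json.load(f)


def summarize_har(har):
    """Map each host to (number of requests, total request time in ms)."""
    counts = Counter()
    times = Counter()
    for entry in har["log"]["entries"]:
        host = urlsplit(entry["request"]["url"]).hostname
        counts[host] += 1
        # In HAR, entry["time"] is the total elapsed time of the request in ms.
        times[host] += entry.get("time", 0)
    return {host: (counts[host], times[host]) for host in counts}
```

If you call summarize_har(load_har("homepage.har")) and sort the result by request count, you can see at a glance which hosts, including third-party ones, account for the most requests.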

If mucking around in the DevTools simply isn’t your thing, you can use online services like Pingdom Speed Test to get most of the same information.

Now that you’ve figured out how many and what kinds of HTTP requests your site is making, you can get to work reducing them.

While most frontend frameworks will automatically minify and combine your JavaScript files for you, there might still be full-size scripts, stylesheets, and extra HTML lying around on your site that can easily be made smaller and combined. If you don’t have too many files to minify, you can paste each one into one of many online minifiers (just try searching for “minify js” on DuckDuckGo – they even have a tool built into the search results). Otherwise, the Autoptimize WordPress plugin can take care of that automatically.
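To show what a minifier actually removes, here is a deliberately naive Python sketch that concatenates stylesheets and strips comments and redundant whitespace. It is no substitute for a real minifier. It does not understand quoted strings, so a value like content: "{ }" would be mangled. It also leaves spaces around colons alone, because a :hover and a:hover mean different things.

```python
import re


def naive_minify_css(css):
    """Strip comments and collapse whitespace in CSS (naive: ignores quoted strings)."""
    css = re.sub(r"/\*.*?\*/", "", css, flags=re.DOTALL)  # remove /* comments */
    css = re.sub(r"\s+", " ", css)                        # collapse runs of whitespace
    css = re.sub(r"\s*([{};,])\s*", r"\1", css)           # drop spaces around { } ; ,
    css = css.replace(";}", "}")                          # last semicolon in a block is optional
    return css.strip()


def combine_and_minify(stylesheets):
    """Concatenate stylesheets in order (order matters for the cascade), then minify."""
    return naive_minify_css("\n".join(stylesheets))
```

For example, a block like "h1 , h2 { color: red; }" becomes "h1,h2{color: red}".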

While minifying code is critical for decreasing the amount of data browsers have to load, HTTP/2 makes it unnecessary to put all of your scripts and stylesheets into one file. With this newer HTTP standard, browsers can request many files in parallel over a single connection (multiplexing) instead of queuing them up over a handful of connections as with HTTP/1.1. HTTP/2 is faster in lots of other ways as well, so if you haven’t gotten around to updating your web server, you should do so soon.
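Whether a server actually speaks HTTP/2 to browsers is decided during the TLS handshake via ALPN (Application-Layer Protocol Negotiation). The Python sketch below offers h2 and http/1.1 and reports which protocol the server picks. It only checks the TLS endpoint you connect to, so if a CDN or proxy sits in front of your site, that is what you are testing.

```python
import socket
import ssl


def make_alpn_context():
    """TLS context (with certificate checks) offering HTTP/2 first, HTTP/1.1 as fallback."""
    context = ssl.create_default_context()
    context.set_alpn_protocols(["h2", "http/1.1"])
    return context


def negotiated_protocol(host, port=443, timeout=5.0):
    """Return the ALPN protocol the server chose ("h2", "http/1.1"), or None if it chose none."""
    context = make_alpn_context()
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls_sock:
            return tls_sock.selected_alpn_protocol()
```

Calling negotiated_protocol("www.example.com"), with your own host name in place of the placeholder, returns "h2" when the server supports HTTP/2.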

A redirect chain occurs when a URL on your site redirects to another URL that in turn redirects somewhere else. Besides requiring three or more requests to get one file, redirect chains make it harder for search engines to crawl your site and can hurt your rankings. The issue can be difficult to diagnose just by looking at the request statuses in the “Network” tab in DevTools, which is why some automated tools can detect redirect chains across every page of your website.

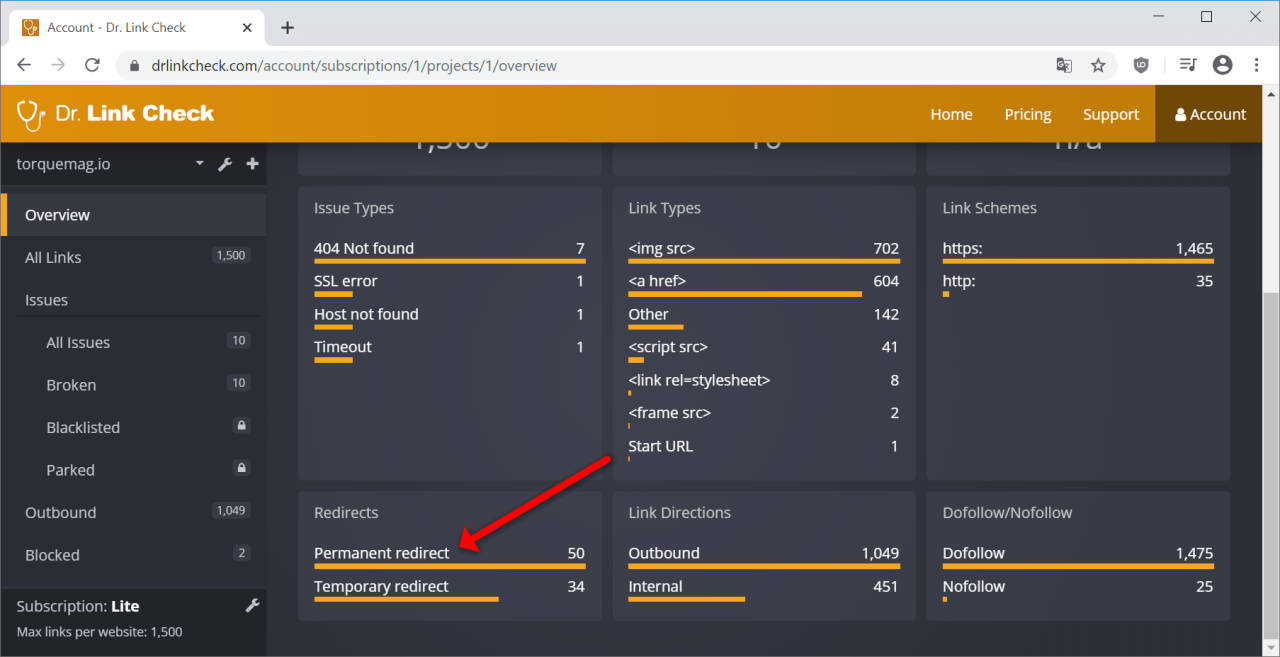

Dr. Link Check is primarily designed to find dead links and links that redirect to parked domains, but it can also find all the redirects and redirect chains by crawling your entire site. After running your website through Dr. Link Check, click on “Permanent redirect” in the “Redirects” section of the results.

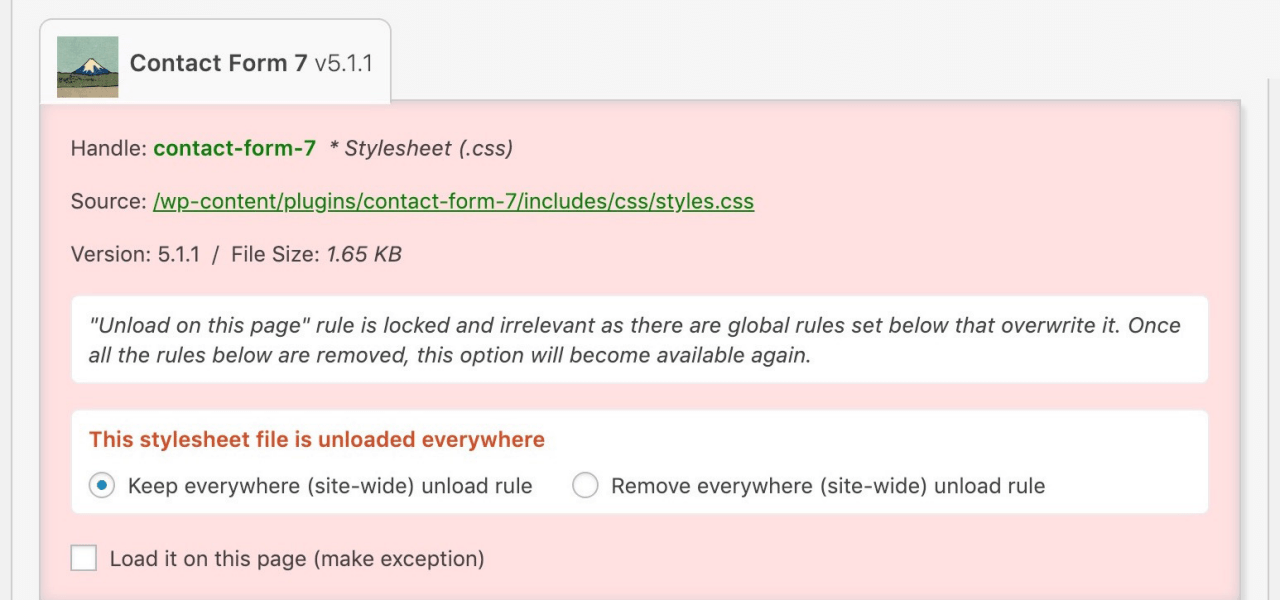
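Once a crawler has recorded where each redirecting URL points, finding chains is a matter of following those pointers. The Python sketch below takes a hypothetical dictionary mapping each URL to its redirect target (not a format Dr. Link Check exports). It reports every path with two or more hops and stops when it detects a redirect loop.

```python
def redirect_chains(redirects, min_hops=2):
    """Return every redirect path that takes at least `min_hops` hops.

    `redirects` maps a URL to the URL it redirects to. A chain that runs into a
    loop ends with the first repeated URL.
    """
    chains = []
    for start in redirects:
        chain = [start]
        seen = {start}
        current = start
        while current in redirects:
            current = redirects[current]
            chain.append(current)
            if current in seen:
                break  # redirect loop: stop instead of following it forever
            seen.add(current)
        if len(chain) - 1 >= min_hops:
            chains.append(chain)
    return chains
```

Every redirecting URL is used as a starting point, because pages may link to the middle of a chain as well as to its beginning.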

WordPress makes it easy to add plugins and scripts to extend your site’s functionality, but loading each of these assets on every single page makes for slow loading times and high server load. The Asset CleanUp plugin lets you select which exact assets (scripts and stylesheets) load on each page, so you aren’t loading the CSS or JavaScript for a specific part of one page on every other page as well.

For example, without Asset CleanUp, your site might be forcing users’ browsers to download the scripts for a Contact Us widget on every single page, even if that widget is only actually being used on a single page. As you might expect, all those extra HTTP requests add up to make your website slower.

Your site might not just be making requests to your own server: external fonts, social media widgets, ads, and external images all load from other servers and can slow your website down significantly. While a fancy font from Google Fonts might look great, it also means that your website must load an additional file from another place on the web. If you still want to keep the font, think about disabling weights and variants that you aren’t currently using. It’s also recommended to avoid using external images. Hosting them yourself means that you aren’t at the mercy of another provider, and they can load much faster.

If you have long pages with lots of images on your site, loading all the images at once, even ones that the user can’t currently see, can take a while and cost you a lot of bandwidth. Instead, try lazy-loading your images using plugins like Smush or Optimole. This way, images only load once the user scrolls down to them instead of all loading when the page is opened. These plugins will also compress your images appropriately to make them load faster without compromising quality.

WordPress is not a static site generator, so whenever a user loads a page, the PHP process has to render that page just for that particular visitor. As you might expect, rendering the exact same page hundreds of times is not very efficient. Plugins like LiteSpeed Cache and WP Fastest Cache allow WordPress to serve a page from cache instead of rendering it for each individual visitor, saving lots of time.
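The caching plugins differ in their details, but the core idea fits in a few lines of Python: store each rendered page with an expiry time and serve the stored copy until it expires or is invalidated. This is a sketch, not how any particular plugin works. The render callback stands in for WordPress's PHP rendering, and the 300-second default lifetime is an arbitrary assumption.

```python
import time


class PageCache:
    """Keeps rendered HTML per URL for `ttl` seconds so repeat visits skip rendering."""

    def __init__(self, render, ttl=300.0, clock=time.monotonic):
        self.render = render  # function(url) -> html, standing in for the PHP render step
        self.ttl = ttl
        self.clock = clock    # injectable so expiry can be tested without waiting
        self.entries = {}     # url -> (expires_at, html)
        self.renders = 0      # how many times render() actually ran

    def get(self, url):
        now = self.clock()
        cached = self.entries.get(url)
        if cached is not None and cached[0] > now:
            return cached[1]  # cache hit: no rendering needed
        html = self.render(url)
        self.renders += 1
        self.entries[url] = (now + self.ttl, html)
        return html

    def invalidate(self, url):
        """Drop a cached page, e.g. after the post was edited."""
        self.entries.pop(url, None)
```

With a 60-second lifetime, a page requested a hundred times within one minute is rendered only once.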

CDNs (content delivery networks) are services that let you offload some HTTP requests (usually images and other assets) to fast servers located near your users. These services usually also perform some caching themselves, decreasing the number of HTTP requests made to your origin server. If you configure your site to proxy through a CDN like Fastly or KeyCDN, big assets are served by the CDN’s servers instead of yours.

A common cause of a slow website is an abundance of unnecessary HTTP requests. It’s easy to find out where requests are coming from with the developer tools built into your browser. Given that each request takes a certain amount of time to be returned, you can make your site much faster by reducing the total number of requests, reducing redirect chains and external requests, lazy-loading images, and caching content on your server and on a CDN. Even if you have already taken care of most of the low-hanging fruit, it’s good to occasionally review the requests your site is making whenever it feels slower than usual.

Have you ever wondered if you can post content to your WordPress blog using your phone or tablet? The answer is yes, but you need XML-RPC enabled on the WordPress blog. If you read about cyber security and WordPress, you might come across the idea that XML-RPC is a security threat and it should be disabled. Here are some facts to help you decide.

XML-RPC is a remote procedure call (RPC) protocol that works over HTTP(S) and uses XML to transport data to and from the WordPress blog. XML-RPC isn’t exclusive to WordPress. Other applications use it too, although it is an older protocol that isn’t used much in newer applications. XML has largely been replaced by JSON, but WordPress is an old platform that still uses many traditional protocols and procedures.

When you log into your WordPress account, you use regular HTTP and form submissions. XML-RPC allows you to log in using something other than a web browser and perform any action allowed by the WordPress API. You can create and edit posts, review tags and categories, read and post comments, and even get statistics on your blog. If you have a mobile app that does any of these actions, it probably uses XML-RPC.
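To see what “uses XML to transport data” means in practice, you can use Python's standard xmlrpc.client module to build the request body that a mobile app would POST to a blog's xmlrpc.php endpoint. wp.getUsersBlogs is a method of WordPress's XML-RPC API. The username and password are placeholders, and the sketch only serializes and parses the payload without contacting any server.

```python
import xmlrpc.client

# Placeholder credentials; wp.getUsersBlogs(username, password) is a WordPress XML-RPC method.
USERNAME, PASSWORD = "editor", "secret"

# Serialize the call into the XML body a client would POST to /xmlrpc.php.
payload = xmlrpc.client.dumps((USERNAME, PASSWORD), methodname="wp.getUsersBlogs")
print(payload)

# The server side parses the XML back into the method name and its parameters.
params, method = xmlrpc.client.loads(payload)
print(method, params)

# Against a real blog, the same call would be made over HTTP(S) like this (not run here):
# proxy = xmlrpc.client.ServerProxy("https://your-blog.example/xmlrpc.php")
# proxy.wp.getUsersBlogs(USERNAME, PASSWORD)
```

The printed payload contains a methodCall element with the method name and one param element per argument. This is also why credentials travel inside the request body, which matters for the brute-force attacks discussed below.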

Before you disable anything on your WordPress blog, you should understand what it’s used for. Disabling the wrong protocol or service can leave your blog unusable by certain applications, and some parts of it might no longer be visible to your users.

XML-RPC is needed if you want to publish any content remotely. Suppose that you have an application on your tablet that lets you jot down ideas and upload them to your blog. You create new posts based on these ideas, but you leave them as drafts until you can write better and more thorough content. For the application to communicate with your blog, you need XML-RPC.

Jetpack is one of the most common plugins that require XML-RPC to be enabled. Jetpack is a popular plugin for site analytics and sharing on social media sites such as Facebook, Twitter, LinkedIn, and Reddit. It also lets you communicate with your blog using WhatsApp. Jetpack has several features, and many of them rely on XML-RPC. Once you disable the protocol, the plugin no longer functions properly.

The negative issues that come with XML-RPC are related to cyber security. It should be noted that WordPress has worked long and hard on combating cyber security issues related to its API and any remote procedures. For the most part, any cyber security issues have been patched. Make sure you look at the date before judging any WordPress feature based on published reports. A cyber security issue could have been a problem years ago, but it only takes WordPress a few months before the issue is patched.

The main issues that come with XML-RPC are DDoS attacks and brute-force attacks on the administrator password. You can combat these issues by installing security plugins such as Wordfence or Sucuri.

DDoS attacks using XML-RPC are mostly on the pingback system. When your article is mentioned and you have pingbacks enabled, the remote site sends your WordPress blog an alert. This alert is called a pingback, and you can get thousands of them a day when an article goes viral. If an attacker wants to DDoS your WordPress blog, they can flood your site with pingbacks until it can’t handle them anymore. The result is a crash on your site, which makes it unavailable to readers.

You can mitigate pingback floods using Akismet, an anti-spam tool that filters spam comments and pingbacks and holds them until you review them. It’s even included with the WordPress installation, so you just need to sign up and receive an API key to use it. Akismet should be included on any blog unless you have a better anti-spam tool for your WordPress comment system.

The second type of attack is a brute-force attack on the administrator username and password. Most brute-force attempts target the administrator account, but before an attacker can guess the password, they need the administrator’s username. Older WordPress versions created an account named “admin” by default, so brute-force scripts try this name first. They then try the name of your blog. These two account names are common on many blogs, and it’s a mistake to use either name for your administrator account.

A brute-force attack is one where the hacker takes a list of dictionary terms and runs them through a script that attempts to log in to your site. If the first attempt fails, the script moves on to the next guess, and the next, until the list is exhausted. Given enough time, the attacker can guess your password and log into your administration dashboard.

The best way to defend against a brute-force attack is to block an IP address after a certain number of failed login attempts. You can do this using the Wordfence plugin. It allows a certain number of login attempts (usually 5) and then blocks the IP from any more attempts for several minutes. The attacker can still try, but finding the password takes far too long when several hours of the day are blocked.
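You can sketch the lockout approach as a small Python class that counts consecutive failures per IP address and refuses further attempts for a while once the limit is reached. The limits (5 failures, a 600-second lockout) are assumptions chosen for illustration, and this is not Wordfence's implementation.

```python
import time
from collections import defaultdict


class LoginLimiter:
    """Blocks an IP for `lockout_seconds` after `max_failures` consecutive failed logins."""

    def __init__(self, max_failures=5, lockout_seconds=600, clock=time.monotonic):
        self.max_failures = max_failures
        self.lockout_seconds = lockout_seconds
        self.clock = clock                # injectable for testing
        self.failures = defaultdict(int)  # ip -> consecutive failed attempts
        self.blocked_until = {}           # ip -> time at which the block ends

    def allow_attempt(self, ip):
        """Return True if this IP may try to log in right now."""
        until = self.blocked_until.get(ip)
        if until is None:
            return True
        if self.clock() >= until:
            del self.blocked_until[ip]  # the block has expired
            return True
        return False

    def record_failure(self, ip):
        self.failures[ip] += 1
        if self.failures[ip] >= self.max_failures:
            self.blocked_until[ip] = self.clock() + self.lockout_seconds
            del self.failures[ip]  # start counting afresh after the lockout

    def record_success(self, ip):
        self.failures.pop(ip, None)
```

A login handler would call allow_attempt(ip) before checking the password, then record_failure or record_success. With these settings, a single IP gets at most 5 guesses per 10 minutes, or roughly 720 guesses per day.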

When you set a password for your administrator account, use a complex one. It should be at least 10 characters long and use uppercase and lowercase letters, digits, and special characters.
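A quick calculation shows why length and character variety matter. The Python sketch below counts the possible passwords for a given length and alphabet. It assumes the attacker tries every combination, so the result is an upper bound: dictionary attacks succeed much faster when the password is a common word.

```python
import string

# 52 letters + 10 digits + 32 ASCII punctuation characters
ALPHABET_SIZE = len(string.ascii_letters + string.digits + string.punctuation)


def keyspace(length, alphabet_size):
    """Number of distinct passwords of exactly `length` characters."""
    return alphabet_size ** length


def years_to_exhaust(length, alphabet_size, guesses_per_day):
    """Years needed to try every combination at a fixed guessing rate."""
    return keyspace(length, alphabet_size) / guesses_per_day / 365


print(ALPHABET_SIZE)
print(f"{years_to_exhaust(10, ALPHABET_SIZE, 720):.1e}")
```

At an assumed 720 guesses per day (about what a 5-attempts-per-10-minutes lockout allows), trying every 10-character password over these 94 symbols would take roughly 2 × 10^14 years.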

If you know that no plugins or apps use the protocol, disabling XML-RPC is an option. Only disable it if you don’t use it, and keep in mind that this could become a problem in the future. If you forget that it’s disabled and later work with a plugin that needs it, you could spend a frustrating time troubleshooting the plugin before realizing that the fault lies with XML-RPC being disabled.

WordPress has also fixed many of the issues with this protocol, so it doesn’t carry the negative cyber security issues that it used to. You can keep the protocol on to ensure that you can remotely work with your WordPress blog, as long as you have at least one cyber security plugin installed that blocks pingback DDoS attacks and brute-force scripts.

By the way, don’t forget to check out Dr. Link Check and run a free broken link check on your WordPress blog.