HTTP redirects are a way of forwarding visitors (both humans and search bots) from one URL to another. This is useful in situations like these:

HTTP is a request/response protocol. The client, typically a web browser, sends a request to a server, and the server returns a response. Below is an example of how Firefox requests the home page of the drlinkcheck.com website:

In this example, the server responds with a 200 (OK) status code and includes the requested page in the body.

If the server wants the client to look for the page under a different URL, it returns a status code from the 3xx range and specifies the target URL in the Location header.
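To make the exchange concrete, here is a minimal sketch in Python. It starts a throwaway local server and sends two requests to it with `http.client`, which does not follow redirects on its own. The paths `/old` and `/new`, the handler class, and the helper functions are made up for this illustration; only the status code and the `Location` header mirror what is described above.

```python
import http.client
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer


class DemoHandler(BaseHTTPRequestHandler):
    """Hypothetical server: /old redirects to /new, everything else is a page."""

    def do_GET(self):
        if self.path == "/old":
            # A 3xx status code plus the target URL in the Location header
            self.send_response(301)
            self.send_header("Location", "/new")
            self.send_header("Content-Length", "0")
            self.end_headers()
        else:
            body = b"Hello from the new location"
            self.send_response(200)
            self.send_header("Content-Type", "text/plain")
            self.send_header("Content-Length", str(len(body)))
            self.end_headers()
            self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # keep the demo output quiet


def fetch(port, path):
    """Send one GET request and return (status, Location header, body)."""
    conn = http.client.HTTPConnection("127.0.0.1", port, timeout=5)
    try:
        conn.request("GET", path)
        response = conn.getresponse()
        return response.status, response.getheader("Location"), response.read()
    finally:
        conn.close()


def run_demo():
    """Request /old, then request whatever the Location header points to."""
    server = HTTPServer(("127.0.0.1", 0), DemoHandler)  # port 0 = any free port
    port = server.server_address[1]
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        first = fetch(port, "/old")
        second = fetch(port, first[1])
        return first, second
    finally:
        server.shutdown()
        server.server_close()


if __name__ == "__main__":
    for result in run_demo():
        print(result)
```

A browser performs the second request automatically; the sketch does it by hand so that both responses are visible.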

HTTP status codes 301 and 308 are used if a resource is permanently moved to a new location. A permanent redirect is the right choice when restructuring a website or migrating it from HTTP to HTTPS.

The difference between code 301 and 308 is in the details. If a client sees a 308 redirect, it MUST repeat the exact same request on the new location, whereas the client may change a POST request into a GET request in the case of a 301 redirect.

This means that, if a POST with a body is made and the server returns a 308 status code, the client must do a POST request with the same body to the new location. In the case of a 301 status code, the client may do this but is not required to do so (in practice, almost all clients proceed with a GET request).

The problem with HTTP status code 308 is that it’s relatively new (introduced in RFC 7538 in April 2015) and, therefore, it is not supported by all browsers and crawlers. For instance, Internet Explorer 11 on Windows 7 and 8 doesn’t understand 308 status codes and simply displays an empty page, instead of following the redirect.

Because support for 308 is still limited, the recommendation is to go with 301 redirects unless you require POST requests to be redirected properly and are certain that all clients understand the 308 status code.

The 302, 303, and 307 status codes indicate that a resource is temporarily available under a new URL, meaning that the redirect has a limited life span and (typically) should not be cached. An example is a website that is undergoing maintenance and redirects visitors to a temporary “Under Construction” page. Marking a redirect as temporary is also advisable when redirecting based on visitor-specific criteria such as geographic location, time, or device.

The HTTP/1.0 specification (released in 1996) only included status code 302 for temporary redirects. Although it was specified that clients are not allowed to change the request method on the redirected request, most browsers ignored the standard and always performed a GET on the redirect URL. That’s the reason HTTP/1.1 (released in 1999) introduced status codes 303 and 307 to make it unambiguously clear how a client should react.

HTTP status code 303 (“See Other”) tells a client that a resource is temporarily available at a different location and explicitly instructs the client to issue a GET request on the new URL, regardless of which request method was originally used.

Status code 307 (“Temporary Redirect”) instructs a client to repeat the request with another URL, while using the same request method as in the original request. For instance, a POST request must always be repeated using another POST request.

In practice, browsers and crawlers handle 302 redirects the same way as specified for 303, meaning that redirects are always performed as GET requests.
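The rules for the five status codes can be summarized in a few lines of Python. This is a simplified model of the behavior described above, not code taken from any real browser or crawler; the function names are my own, and for 301 and 302 it assumes the common practice of turning a POST into a GET.

```python
PERMANENT = {301, 308}
TEMPORARY = {302, 303, 307}


def is_permanent(status):
    """Tell whether a redirect status code marks a permanent move."""
    if status in PERMANENT:
        return True
    if status in TEMPORARY:
        return False
    raise ValueError(f"{status} is not one of the five redirect codes")


def follow_up_method(status, method):
    """Return the request method a typical client uses on the new URL."""
    method = method.upper()
    if status in (307, 308):
        return method  # method (and body) must be repeated unchanged
    if status == 303:
        return "GET"  # always switch to GET
    if status in (301, 302):
        # Assumption: behave like most clients and downgrade POST to GET
        return "GET" if method == "POST" else method
    raise ValueError(f"{status} is not one of the five redirect codes")


if __name__ == "__main__":
    for code in (301, 302, 303, 307, 308):
        kind = "permanent" if is_permanent(code) else "temporary"
        print(code, kind, "POST ->", follow_up_method(code, "POST"))
```

Only 307 and 308 keep a POST as a POST, which is why they are the codes to reach for when a form submission has to survive the redirect.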

Even though status codes 303 and 307 were standardized in 1999, there are still clients that don’t implement them correctly. Just like with status code 308, the recommendation, therefore, is to stick with 302 redirects, unless you need a POST request to be repeated (use 307 in this case) or know that intended clients support codes 303 and 307.

When Google sees a permanent 301 or 308 redirect, it removes the old page from the index and replaces it with the page from the new location. The question is how this affects the ranking of the page. In this video, Matt Cutts explains that you lose only “a tiny little bit” of link juice if you do a 301 redirect. Therefore, permanent redirects are the way to go if you want to restructure your site without negatively affecting its Google rankings.

Temporary redirects (status codes 302, 303, and 307), on the other hand, are more or less ignored by Google. The search engine knows that the redirect is only temporary and keeps the original page indexed without transferring any link juice to the destination URL.

Another aspect to consider when using HTTP redirects is the performance impact. Each redirect requires an extra HTTP request to the server, typically adding a few hundred milliseconds to the loading time of the page. This is bad from a user experience perspective and puts unnecessary stress on the web server. While a single redirect doesn’t hurt too much, redirect chains in which one redirect leads to another redirect should definitely be avoided.
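A redirect chain is easy to spot once the redirects are written down as a mapping from source URL to target URL. The sketch below walks such a mapping and returns every URL visited; the function name, the `max_hops` limit, and the example URLs are illustrative assumptions, and no network requests are made.

```python
def follow_redirects(redirect_map, start_url, max_hops=10):
    """Return the list of URLs visited, starting with start_url."""
    chain = [start_url]
    current = start_url
    while current in redirect_map:
        current = redirect_map[current]
        if current in chain:
            raise ValueError(f"redirect loop detected at {current}")
        chain.append(current)
        if len(chain) - 1 > max_hops:
            raise ValueError(f"more than {max_hops} redirects")
    return chain


# Hypothetical setup: HTTP to HTTPS first, then to the www host.
REDIRECTS = {
    "http://example.com/": "https://example.com/",
    "https://example.com/": "https://www.example.com/",
}

if __name__ == "__main__":
    chain = follow_redirects(REDIRECTS, "http://example.com/")
    print(" -> ".join(chain))
    print(f"{len(chain) - 1} extra request(s) before the page loads")
```

In this example, every visitor to the old address pays for two extra round trips. Pointing the first URL directly at the final one cuts that to a single redirect.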

If you want to identify all redirects on your website, our link checker can help – just enter the URL of your website and hit the Start Check button. Once the check is complete, you will see the number of found temporary and permanent redirects under Redirects. Click on one of the items to get a list of all redirected links. If you hover over a link item and click the Details button, you can see the entire redirect chain.

Five different redirect status codes – no wonder many website owners get confused when it comes to redirects. My advice is the following:

I hope this sheds some light on how HTTP redirects work and which HTTP status code to choose in which situation.

Our link checker relies heavily on AWS, and on EC2 instances in particular. One of the more difficult decisions when dealing with EC2 is choosing the right instance type. Will burstable and (potentially) cheap T3 instances do the job, or should you pay more for general purpose M5 instances? In this blog post, I will try to shed some light on this and provide answers to the following questions:

Without further ado, let me dive directly into some actual testing. I create a t3.large instance (without Unlimited mode) in the us-east-2 region and select Ubuntu 18.04 as the operating system. The first thing I check is the processor the machine is running on:

$ cat /proc/cpuinfo | grep -E 'processor|model name|cpu MHz'

processor : 0

model name : Intel(R) Xeon(R) Platinum 8175M CPU @ 2.50GHz

cpu MHz : 2500.000

processor : 1

model name : Intel(R) Xeon(R) Platinum 8175M CPU @ 2.50GHz

cpu MHz : 2500.000

As you can see from the output, the T3 instance has access to two vCPUs (= hyperthreads) of an Intel Xeon Platinum 8175M processor.

In order to test the performance of the processor, I install sysbench …

$ sudo apt-get install sysbench

… and start the benchmark (which stresses the CPU for ten seconds by calculating prime numbers):

$ sysbench --threads=2 cpu run

sysbench 1.0.11 (using system LuaJIT 2.1.0-beta3)

Running the test with following options:

Number of threads: 2

Initializing random number generator from current time

Prime numbers limit: 10000

Initializing worker threads...

Threads started!

CPU speed:

events per second: 1623.24

General statistics:

total time: 10.0012s

total number of events: 16237

Latency (ms):

min: 1.16

avg: 1.23

max: 9.89

95th percentile: 1.27

sum: 19993.46

Threads fairness:

events (avg/stddev): 8118.5000/0.50

execution time (avg/stddev): 9.9967/0.00

The events per second (1623 in this case) is the number you should care about. The higher this number, the higher the performance of the CPU. As points of reference, here are the results from the same test on several other cloud machines as well as some desktop and mobile processors:

| Instance Type | Processor | Threads | Burst (events/s) | Baseline (events/s) |
|---|---|---|---|---|
| t3.micro | Intel Xeon Platinum 8175M | 2 | 1654 | 175 |
| t3.medium | Intel Xeon Platinum 8175M | 2 | 1653 | 343 |
| t3.large | Intel Xeon Platinum 8175M | 2 | 1623 | 510 |
| m5.large | Intel Xeon Platinum 8175M | 2 | 1647 | 1647 |
| t3a.nano | AMD EPYC 7571 | 2 | 1510 | 75 |
| t2.large | Intel Xeon E5-2686 v4 | 2 | 1830 | 538 |
| a1.medium | AWS Graviton | 1 | 2281 | 2281 |
| t4g.micro | AWS Graviton2 | 2 | 5922 | 592 |
| m4.large | Intel Xeon E5-2686 v4 | 2 | 1393 | 1393 |
| c4.large | Intel Xeon E5-2666 v3 | 2 | 1583 | 1583 |
| GCP f1-micro | Intel Xeon (Skylake) | 1 | 956 | 190 |
| GCP e2-medium | Intel Xeon | 2 | 1434 | 717 |
| GCP n1-standard-2 | Intel Xeon (Skylake) | 2 | 1435 | 1435 |
| Linode 2GB | AMD EPYC 7501 | 1 | 1236 | 1170 |
| Hetzner CX11 | Intel Xeon (Skylake) | 1 | 970 | 860 |
| Dedicated Server | Intel Xeon E3-1271 v3 | 8 | 7530 | 7530 |
| Desktop | Intel Core i9-9900K | 16 | 19274 | 19274 |
| Laptop | Intel Core i7-8565U | 8 | 8372 | 8372 |

While bursting, T3 instances provide the same CPU performance as equally sized M5 instances, which answers my first question from above.

For the next test, I let the t3.large instance sit unused for 24 hours until it has accrued the maximum number of CPU credits.

T3 instances always start with zero credits and earn credits at a rate determined by the size of the instance. A t3.large instance earns 36 CPU credits per hour with a maximum of 864 credits. According to the AWS documentation, one CPU credit is equal to one vCPU running at 100% for one minute. So how long should my t3.large instance be able to burst to 100% CPU utilization?

If the instance has 864 CPU credits, uses 2 credits per minute, and refills its credits at a rate of 0.6 (= 36/60) per minute, it should have the capacity to burst for 864 / (2 - 0.6) = 617 minutes = 10.3 hours. Let me put that to a test.
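The same arithmetic as a small Python sketch, in case you want to plug in the numbers for another instance size. The function and parameter names are my own; the values for t3.large are the ones quoted above.

```python
def burst_minutes(credit_balance, vcpus, credits_earned_per_hour):
    """Minutes an instance can run all vCPUs at 100% before credits run out."""
    spent_per_minute = vcpus  # one credit = one vCPU at 100% for one minute
    earned_per_minute = credits_earned_per_hour / 60
    net_drain = spent_per_minute - earned_per_minute
    if net_drain <= 0:
        return float("inf")  # credits refill at least as fast as they are spent
    return credit_balance / net_drain


if __name__ == "__main__":
    minutes = burst_minutes(credit_balance=864, vcpus=2, credits_earned_per_hour=36)
    print(f"{minutes:.0f} minutes = {minutes / 60:.1f} hours")
```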

$ sysbench --time=0 --threads=2 --report-interval=60 cpu run

Just as expected, the performance drops to about 30% (the baseline performance for t3.large instances) after about ten and a half hours.
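As a cross-check, the throttled level can also be derived from the burst and baseline figures in the CPU benchmark table earlier in this post. The short sketch below only divides numbers copied from that table; the dictionary and function names are made up for the illustration.

```python
# (burst, baseline) in events per second, copied from the benchmark table
RESULTS = {
    "t3.micro": (1654, 175),
    "t3.medium": (1653, 343),
    "t3.large": (1623, 510),
}


def baseline_share(instance_type):
    """Baseline performance as a fraction of burst performance."""
    burst, baseline = RESULTS[instance_type]
    return baseline / burst


if __name__ == "__main__":
    for name in RESULTS:
        print(f"{name}: {baseline_share(name):.0%} of burst performance")
```

For the t3.large instance, the ratio comes out slightly above 30%, which is in line with the drop observed in the long-running test.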

T3 instances with activated Unlimited Mode are allowed to burst even if no CPU credits are available. This comes at a price: a T3 instance that continuously bursts at 100% CPU costs approximately 1.5 times the price of an equally sized M5 instance. However, how reliable is Unlimited Mode? My worry is that AWS puts too many instances on a single physical machine, so not enough spare burst capacity is available. To answer this question, I launch a t3.nano instance with Unlimited Mode and let it run at full steam for about four days.

As promised, there is no drop in CPU performance. The t3.nano instance delivers the full capacity of 2 vCPUs (almost) all the time. Quite impressive!

Instead of running my own network performance tests, I rely on the results that Andreas Wittig published on the cloudonaut blog. He used iperf3 to determine the baseline and burst network throughput for different EC2 instance types. Here are the values for different T3 instances and an m5.large instance:

| Instance Type | Burst (Gbit/s) | Baseline (Gbit/s) |
|---|---|---|
| t3.nano | 5.06 | 0.03 |
| t3.micro | 5.09 | 0.06 |
| t3.small | 5.11 | 0.13 |
| t3.medium | 4.98 | 0.25 |
| t3.large | 5.11 | 0.51 |
| m5.large | 10.04 | 0.74 |

Although an m5.large instance costs only about 15% more than a t3.large instance, it provides an about 45% higher baseline throughput (0.74 vs. 0.51 Gbit/s) and nearly double the burst capacity (10.04 vs. 5.11 Gbit/s).
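The throughput ratios can be recomputed directly from the table. The following sketch uses only the measured values listed above; the names are illustrative.

```python
# (burst, baseline) in Gbit/s, copied from the table above
THROUGHPUT = {
    "t3.large": (5.11, 0.51),
    "m5.large": (10.04, 0.74),
}


def ratio(instance_a, instance_b, column):
    """How many times higher instance_a scores than instance_b.

    column is 0 for burst and 1 for baseline throughput.
    """
    return THROUGHPUT[instance_a][column] / THROUGHPUT[instance_b][column]


if __name__ == "__main__":
    print(f"burst:    {ratio('m5.large', 't3.large', 0):.2f}x")
    print(f"baseline: {ratio('m5.large', 't3.large', 1):.2f}x")
```

With these figures, the m5.large instance comes out at roughly 1.96 times the burst throughput and 1.45 times the baseline throughput of the t3.large instance.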

When determining how much network throughput you need, consider that EBS volumes are network-attached.

T3 instances are great! Even a t3.nano instance at a monthly on-demand price of less than $4 gives you access to the full power of two hyperthreads on an Intel Xeon processor and at burst runs as fast as a $70 m5.large instance. By activating Unlimited Mode, you can easily insure yourself against running out of CPU credits and being throttled.

If you don’t need the 8 GiB of memory (RAM) that an m5.large instance provides and can live with the lower network throughput, one of the smaller T3 instances with activated Unlimited Mode might be the much more cost-effective choice. In the end, it depends on how high the average CPU usage of your instance is. The table below lists the CPU utilizations up to which bursting T3 instances remain cheaper than an m5.large instance. Please note that the calculations are based on the on-demand prices and might be different when using reserved instances.

| Instance Type | Memory | Cost-effective if average CPU usage is less than |
|---|---|---|
| t3.nano | 0.5 GiB | 95.8% |
| t3.micro | 1 GiB | 95.6% |
| t3.small | 2 GiB | 95.2% |
| t3.medium | 4 GiB | 74.4% |
| t3.large | 8 GiB | 42.5% |
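If you want to redo this calculation with your own prices, the sketch below shows one way to do it. All figures in it are assumptions on my part rather than values taken from this post: on-demand Linux prices per hour in a US region, the per-vCPU baseline of each instance size, and a surcharge of $0.05 per vCPU-hour for bursting above the baseline in Unlimited Mode. With these inputs, the results land within a few tenths of a percentage point of the table above.

```python
# Assumed on-demand prices in USD per hour; replace with current values.
M5_LARGE_PRICE = 0.096
SURPLUS_PRICE_PER_VCPU_HOUR = 0.05  # assumed Unlimited Mode surcharge
VCPUS = 2

# instance type -> (assumed hourly price, assumed baseline per vCPU)
T3_INSTANCES = {
    "t3.nano": (0.0052, 0.05),
    "t3.micro": (0.0104, 0.10),
    "t3.small": (0.0208, 0.20),
    "t3.medium": (0.0416, 0.20),
    "t3.large": (0.0832, 0.30),
}


def break_even_utilization(instance_type, reference_price=M5_LARGE_PRICE):
    """Average CPU utilization at which the T3 instance costs as much as M5."""
    hourly_price, baseline = T3_INSTANCES[instance_type]
    budget_for_bursting = reference_price - hourly_price
    # vCPU-hours above the baseline that this budget pays for, per hour
    surplus_vcpu_hours = budget_for_bursting / SURPLUS_PRICE_PER_VCPU_HOUR
    return min(1.0, baseline + surplus_vcpu_hours / VCPUS)


if __name__ == "__main__":
    for name in T3_INSTANCES:
        print(f"{name}: {break_even_utilization(name):.1%}")
```

The model looks at the long-run average only: usage up to the baseline is covered by the instance price, and everything above it is billed at the surcharge rate.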

Last but not least, let me state that you shouldn’t rely solely on the benchmarks and comparisons in this post. You are welcome to use my findings as a first guide when choosing the right instance type, but don’t forget to run your own tests and make your own calculations.

The days of Flash are numbered. Chrome has blocked Flash content by default since version 76 (released in July 2019) and Firefox since version 69 (September 2019), and Google has announced it will stop indexing SWF files by the end of 2019. The final nail in the coffin will come later in 2020, when Adobe officially ends support for the Flash Player.

According to W3Techs, only about 3 percent of all websites utilize Flash nowadays. This doesn’t sound like very much, but considering the huge number of websites on the web, it still means that millions of sites rely on Flash. If your website is among them, it’s definitely time to act!

The first step in migrating a website away from Flash is identifying the pages that need to be updated. In this post I will demonstrate how to use Dr. Link Check to crawl a website in order to find all SWF files and the pages linking to them.

Navigate to the Dr. Link Check home page, enter the address of your website into the input field, and click the Start Check button.

The service immediately starts crawling through your website, which may take a while. Once the crawl is complete, click the All Links item in the sidebar on the left.

Click the Add button in the Filter bar, select URL from the drop-down menu, enter .swf into the input field, and press Enter to confirm the input.

The list will now only display links containing “.swf” in their URL. In order to see the pages linking to the Flash file, hover over the link and click the Details button.
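For a single page, the same kind of filtering can be sketched with Python's standard library. This is not how Dr. Link Check works internally; it is a small illustration that scans one HTML document for URLs whose path ends in `.swf`. The class name, the list of attributes it inspects, and the sample markup are assumptions.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class FlashLinkFinder(HTMLParser):
    """Collect absolute URLs of SWF files referenced in an HTML document."""

    URL_ATTRIBUTES = {"href", "src", "data", "value"}

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.flash_urls = []

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if name in self.URL_ATTRIBUTES and value:
                url = urljoin(self.base_url, value)
                # Compare the path only, so query strings don't get in the way
                if urlparse(url).path.lower().endswith(".swf"):
                    self.flash_urls.append(url)


SAMPLE_HTML = """
<a href="/games/intro.swf">Play the intro</a>
<embed src="banner.SWF?v=2">
<a href="/about.html">About us</a>
"""

if __name__ == "__main__":
    finder = FlashLinkFinder("https://example.com/page/")
    finder.feed(SAMPLE_HTML)
    for url in finder.flash_urls:
        print(url)
```

A crawler would run this over every page of the site and remember which page each match came from.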

Although Dr. Link Check is primarily a broken link checker, its flexibility also makes it an excellent tool for finding specific files on a website. You can not only use it to search for Flash, but also for Java, Silverlight, or any other type of content that’s identifiable by filename. Give it a try!

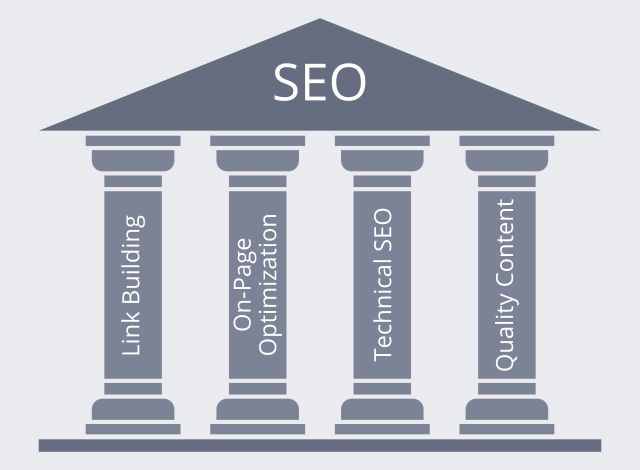

Social media may have been the star of the digital marketing scene in recent times, but reports of SEO’s demise are greatly exaggerated. Effective search engine optimization can still drive plenty of profitable traffic to a website, but it needs to be approached correctly. What do you need to consider when developing a successful modern strategy?

Links have always been the foundation of the Google algorithm. Although the specifics have changed over time, a good link will always be a positive for your ranking. But what should you be looking for when building links?

On-page optimization is all about getting your page’s ducks in a row to leave the algorithm with no doubt about its theme. Although it’s nowhere near the make-or-break factor it once was, it’s still vital.

Technical SEO is in some ways the ugly duckling of the optimization family. It’s rarely exciting, but it provides the foundation on which other parts of the discipline can build. In essence, it means ensuring your site is easily understood by the search engine algorithm, with no technical glitches or confusion to trip the spiders up. Here are the most important things to consider.

The final part of the optimization jigsaw is high-quality content. Search engines strive to direct users to genuinely useful sites, and if your content is poor, you won’t fit this criterion. Not only that, but high-quality content increases user engagement, and this feeds directly back into the ranking system via tracking through Google’s advertising, analytics, and social media platforms.

Modern online marketing offers countless ways of driving traffic to a website, but search engine optimization remains one of the most powerful and cost-effective. But it’s not something you can take for granted or approach half-heartedly. Pay attention to these four pillars, and you’ll be giving your SEO efforts an essential underpinning for profitable success.

We have added two new options to the Project Settings dialog that allow you to configure where and when to send notification emails about completed link checks. Previously, the results of a recurring check were always sent to the account holder’s email address. Now you can use different recipients for different projects.

In order to have the emails delivered to multiple recipients, simply enter the addresses separated by a comma. The first email address will be used for the email’s To field, the remaining addresses go to the Cc field.

If you are not interested in getting emails about checks that didn’t identify any dead or bad links, tick the checkbox next to Only send if issues were found. Dr. Link Check will then keep quiet until something worth reporting is found.
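The following sketch illustrates how the two settings interact. It is not the service's actual code: the function name, its parameters, and the message text are invented, and it only builds the message with Python's `email` package without sending anything.

```python
from email.message import EmailMessage


def build_notification(recipients, issue_count, only_if_issues=False):
    """Build the notification email, or return None if nothing should be sent.

    recipients is the comma-separated string from the settings dialog.
    """
    if only_if_issues and issue_count == 0:
        return None  # stay quiet until there is something worth reporting

    addresses = [part.strip() for part in recipients.split(",") if part.strip()]
    if not addresses:
        raise ValueError("at least one recipient is required")

    message = EmailMessage()
    message["To"] = addresses[0]  # first address goes to the To field
    if len(addresses) > 1:
        message["Cc"] = ", ".join(addresses[1:])  # the rest go to Cc
    message["Subject"] = f"Link check complete: {issue_count} issue(s) found"
    message.set_content("Open your project to view the full report.")
    return message


if __name__ == "__main__":
    mail = build_notification("a@example.com, b@example.com, c@example.com", 3)
    print("To:", mail["To"])
    print("Cc:", mail["Cc"])
    print(build_notification("a@example.com", 0, only_if_issues=True))
```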

The majority of the top 1 million websites now use HTTPS, and for good reason:

Considering that SSL/TLS certificates don’t even cost you anything (see Let’s Encrypt for free certificates), there’s every reason to use HTTPS for a website.

When migrating an existing site from HTTP to HTTPS, it’s often difficult to find all the old http:// URLs that need to be changed to https://. Missing just a single link to an image, script, or other resource results in a Mixed Content warning in the browser. In this post, I will demonstrate how to use Dr. Link Check for finding all http:// URLs on a website.

Go to the Dr. Link Check homepage, enter the address of your website, and click on Start Check.

After the check is complete, Dr. Link Check will display the number of http:// links under Link Schemes on the Overview page. Click the http: item to open a report listing the found links.

The report lists all http:// links found on your website, including those to other websites. If you want to restrict the report to internal links, click the Add button in the Filter bar at the top of the report, and select Direction from the menu. Now, click on Outbound and select the Internal menu item.
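To illustrate what the report and the filter do, here is a minimal Python sketch that scans a single HTML document for http:// URLs and labels each one as internal or external. It is an illustration under my own assumptions (class name, inspected attributes, sample markup), not the implementation behind Dr. Link Check.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class InsecureLinkFinder(HTMLParser):
    """Collect http:// URLs and classify them by host."""

    def __init__(self, site_host):
        super().__init__()
        self.site_host = site_host.lower()
        self.links = []  # list of (direction, url) tuples

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if name in ("href", "src") and value:
                parts = urlparse(value)
                if parts.scheme == "http":
                    same_host = parts.hostname == self.site_host
                    direction = "internal" if same_host else "external"
                    self.links.append((direction, value))


SAMPLE_HTML = """
<img src="http://www.example.com/logo.png">
<a href="http://other.example.org/">Partner site</a>
<script src="https://www.example.com/app.js"></script>
"""

if __name__ == "__main__":
    finder = InsecureLinkFinder("www.example.com")
    finder.feed(SAMPLE_HTML)
    for direction, url in finder.links:
        print(direction, url)
```

The internal entries are the ones to change to https:// first, since insecure images and scripts loaded by your own pages are what trigger Mixed Content warnings.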

As presented above, Dr. Link Check is not only a great broken link checker, but it can also help you migrate your website from HTTP to HTTPS. Give it a try!